The problem is that the LIDAR Audi uses in its adaptive cruise control systems has a much lower resolution than the 360º spinning LIDAR setups on Waymo cars.

They're not the same.

So mass-market volume for one doesn't carry over to the other.

Bottom line is that LIDAR does not give context, only distance. Cameras don't give context either, but with CV (computer vision) you can create context.

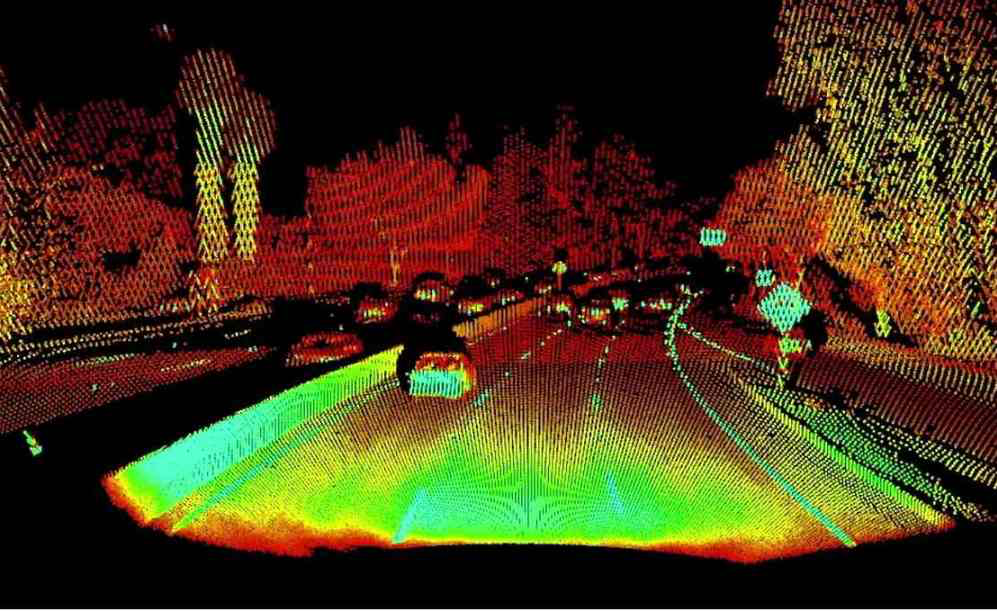

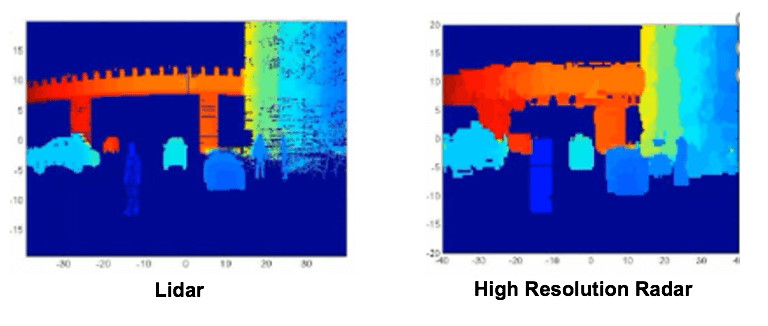

What I'm saying is: this is the LIDAR you'd wish for:

Based on vague shapes, you can recognise a wall and a car on the left, something that looks like a sign on the right, and vehicles up ahead. You can also recognise trees, but you cannot read what's on the sign. You need cameras for that.

But if you use a camera, you can recognise all those items too. So what advantage does LIDAR give?

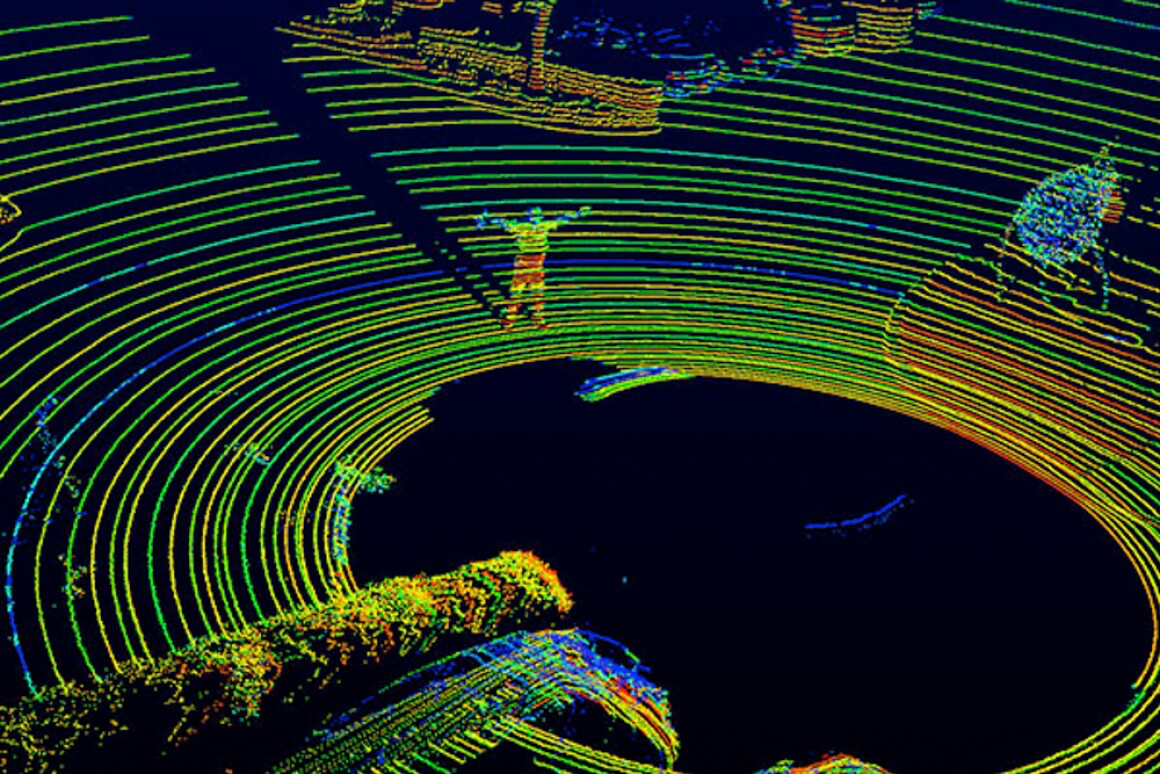

Here's another example:

You can recognise a person, but that's about it. Beyond that, you have no context for where you are.

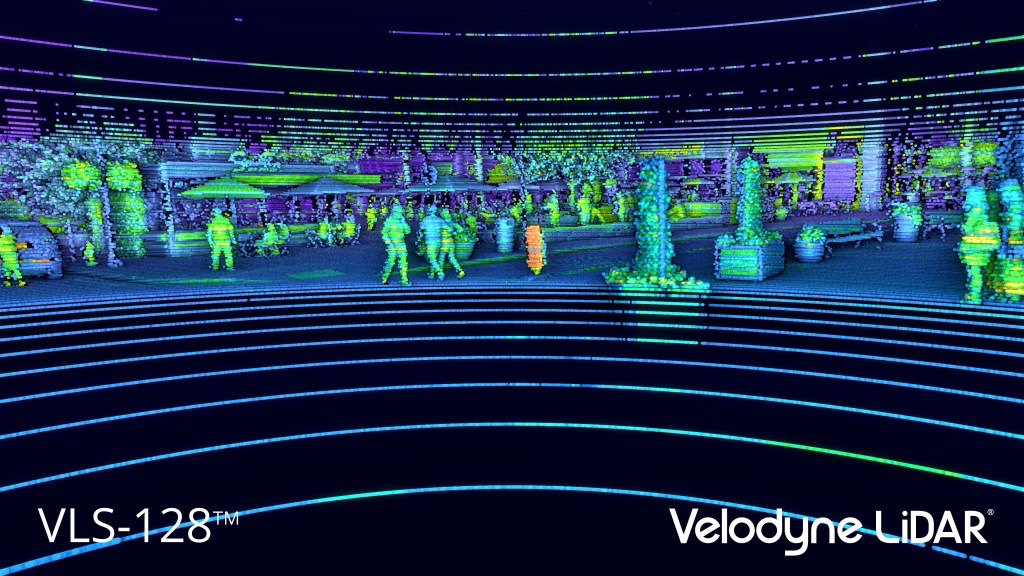

Velodyne's own system gives this result:

Again, you can recognise people, but that's because we recognise the low-resolution shape and try to build context from it. In effect, our mind is reconstructing context based on vision, not on the 3D point cloud.

LIDAR works on the principle that a laser shines infrared light on an object and the sensor captures the reflection. It then determines the distance to the reflecting surface from how long the pulse took to travel there and back, using the speed of light.
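The time-of-flight principle can be sketched in a few lines of Python. Because the pulse travels to the surface and back, the distance is half the round-trip path, d = c·t/2, so a 200 ns round trip corresponds to roughly 30 m. This is a minimal sketch assuming an idealised single pulse in a vacuum; the function names and the azimuth/elevation convention are hypothetical and not taken from any real sensor's API. The second function shows that each return is just a point in space, with no label attached.

```python
import math

# Speed of light in a vacuum, in metres per second (exact by SI definition).
SPEED_OF_LIGHT_M_S = 299_792_458.0


def time_of_flight_distance(round_trip_s: float) -> float:
    """Hypothetical helper: distance to the reflecting surface from round-trip time.

    The pulse goes out and comes back, so the one-way distance is half
    the total path: d = c * t / 2. Assumes a vacuum (ignores the air's
    refractive index) and an ideal, instantaneous detector.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def return_to_point(distance_m: float, azimuth_deg: float, elevation_deg: float):
    """Hypothetical helper: turn one return (range + beam angles) into (x, y, z).

    Assumed convention: azimuth measured in the horizontal plane from the
    x-axis, elevation measured up from that plane. Real sensors differ.
    The result is pure geometry; nothing says what object the point belongs to.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    x = distance_m * math.cos(el) * math.cos(az)
    y = distance_m * math.cos(el) * math.sin(az)
    z = distance_m * math.sin(el)
    return (x, y, z)


if __name__ == "__main__":
    d = time_of_flight_distance(200e-9)  # a 200 ns round trip
    print(f"distance: {d:.2f} m")
    print("point:", return_to_point(d, 0.0, 0.0))
```

A spinning unit repeats this for every beam angle it sweeps, which is how the point cloud is built up; recognising what those points are is a separate step.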

Cameras don't send out infrared light. They just capture existing light and register it on their sensor. They lose distance data but capture colour. And with CV, you can recognise objects because you have much richer colour information to work with.

If you look at front-facing LIDAR applications for adaptive cruise control, like Audi's, you are looking at the following resolutions:

You could claim that you'd need LIDAR and CV to have a complete picture, except CV and LIDAR produce such different results that you end up working to make both stacks recognise the same thing. And what will you do if the LIDAR and CV stacks don't recognise the same thing? Which one will you trust?
