Was listening to Karpathys most recent talk:

He starts at 3:09.

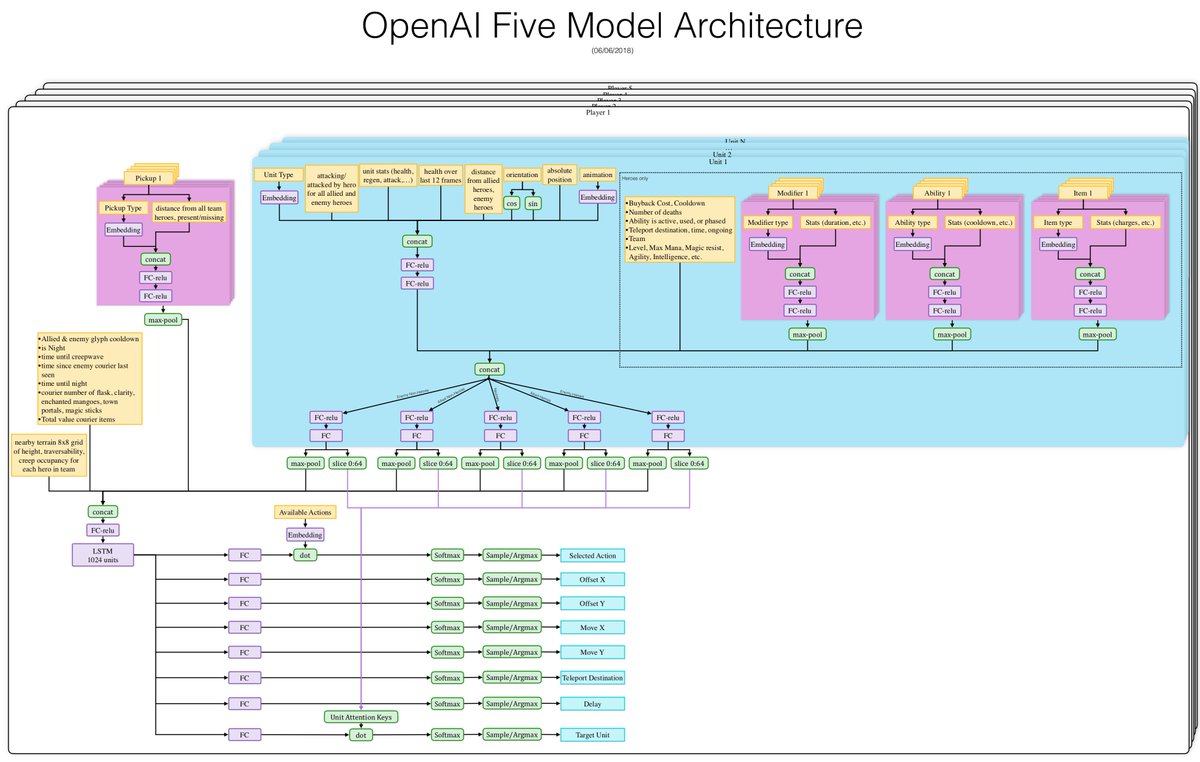

At 11:45 and forward he says that he believes in "Building a single model". I assume that the bigger FSD NN that we have seen might is the single model he talks about. And I assume this is some common layers and then a lot of different layers for the different tasks. Something like A->(B,C,D,E->(F,G->H))) or something like this:

Thanks for sharing that - really interesting. AK's talks are one of the best windows we have into the technical details of AP right now. He seems to intentionally not talk about Tesla in particular but the examples he provides from his experience there provide us with some insight nonetheless.

Apologies to anyone for whom the following is obvious - I'm going to provide a little explanation of the talk for the use of people who aren't very familiar with NNs. I've received quite a bit of feedback from them that such explanations are appreciated so I'm going to try to translate some of this stuff for their use.

AK talk translation:

Talk is for audience of people who work with NNs all the time and think in NN jargon. Am translating the overall points of it here:

Of course AK is an NN guy but recently he’s been promoting this idea that NNs are just one example of what he calls SW2.0 (software 2.0). So here he talks about SW2.0 but you can understand it right now as being mainly talking about making NNs for use in real products.

’SW 2.0’ is a new and very different method of making computer programs. Instead of writing a very precise and detailed recipe for the steps you want the program to perform you instead create a detailed description of what the program’s output should be. The SW2.0 process will produce a system that will then ‘learn’ / ‘discover’ / ‘search’ for a program that has the desired behavior.

Side note from jimmy_d: I say learn/discover/search because people in the field tend to use these terms interchangeably even though the plain english meaning is quite different. The process of training an NN is similar at a high level to ‘learning’ but at a low level the detailed algorithm uses a search method called gradient descent, so people in the field often talk about searching.

To do software 2.0 development you have to also do a lot of regular software development because the code that ‘searches’ is a regular software 1.0 program and there is also a lot of software 1.0 code used to create the very detailed behavior description. That means you have to write and maintain a lot of regular software which you use as part of the SW 2.0 (neural network) development process.

jimmy_d note: that ‘description’ is frequently in the form of a large database of particular behaviors you want from the SW2 program (or NN, in this case). For instance, your database could have a lot of examples of ‘in situation Y the correct output is X’. In learning parlance we call that the training set, but in Karpathy’s SW2 terminology he thinks of it as the software specification.

AK: Tesla uses a lot of NNs in AP

AK: Want to take best practices from regular code development and use them for SW2 code. For example, in SW1 test driven development you write the tests first then write your SW1 code to pass the tests.

AK then goes on to talk about various lessons from SW1 development and how there are good parallels in SW2 development. It’s really very interesting if you’re in the business.

So what do we learn about AP from this talk? Mainly we learn that Tesla’s AP development effort is very heavily invested in NNs to the point that they are pioneering a novel development methodology and a lot of new tools in order to maximally leverage the traits of NNs for the benefit of AP. We learn that they are using a production system that is heavily focused on version control, data management, and automated regression testing. AK also implies that a certain amount of automated deployment is going on.