The end game is that, in addition to the CPU/GPU/Flash/RAM silicon we have in every computing device, there will be additional silicon for AI inference (i.e. running the neural networks). That will be TPUs (dedicated neural processors), not GPUs. There will be lots of different TPUs, each one matched to its intended use, e.g. the Tesla Autopilot chip or the 16-core Neural Engine in Apple's M1 series. If you are a software developer (aka neural network consumer), you're probably going to use an abstraction layer that can talk to all these different devices without hardcoding for one of them (so that you don't depend on Nvidia CUDA). I'm currently using ONNX.

Nvidia is probably not going to be dominant here because:

- SoC builders like Intel, AMD and Apple will integrate that functionality into their SoCs (to some degree they already have).

- If it's not built into the SoC, there is still the option of a dedicated TPU. Google sells one cheaply (Coral), there are others, and probably an order of magnitude more are under development.
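To illustrate the abstraction-layer point: with ONNX Runtime, the same `.onnx` model can run on different back-ends ("execution providers"), chosen when the session is created. Below is a minimal sketch, assuming an illustrative preference order (Core ML, then OpenVINO, then CUDA, then CPU); `choose_providers` is a hypothetical helper. The real entry points would be `onnxruntime.get_available_providers()` and the `providers=` argument of `onnxruntime.InferenceSession`, but the selection logic is plain Python so it runs without onnxruntime installed.

```python
# Execution-provider names as used by ONNX Runtime.
# The preference order below is an illustrative assumption, not a recommendation.
PREFERRED_PROVIDERS = (
    "CoreMLExecutionProvider",    # Apple devices via Core ML (may use the Neural Engine)
    "OpenVINOExecutionProvider",  # Intel hardware via OpenVINO
    "CUDAExecutionProvider",      # Nvidia GPUs
    "CPUExecutionProvider",       # always-available fallback
)


def choose_providers(available, preferred=PREFERRED_PROVIDERS):
    """Hypothetical helper: return the preferred providers that are available,
    in preference order, always ending with the CPU fallback."""
    chosen = [p for p in preferred if p in available]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")
    return chosen


if __name__ == "__main__":
    # With onnxruntime installed, `available` would come from
    # onnxruntime.get_available_providers(), and the result would be passed as
    # onnxruntime.InferenceSession("model.onnx", providers=chosen).
    print(choose_providers(["CUDAExecutionProvider", "CPUExecutionProvider"]))
```

The application code stays the same on every machine; only the list of available providers changes, which is exactly what avoids hardcoding for CUDA.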

Nvidia sells its own ARM-based SoC products in this market: the Jetson family, including the Xavier modules.

TPUs give more bang for the buck than GPUs:

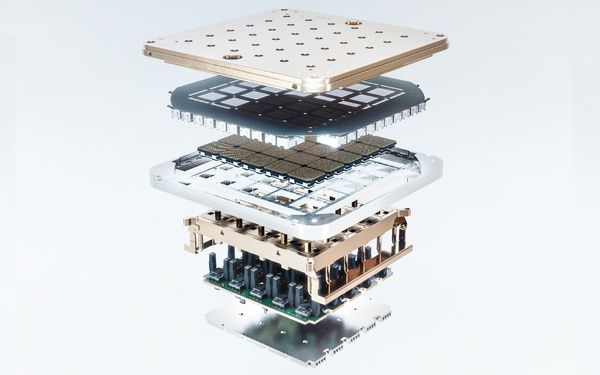

Then there is the training part of AI. This requires massive data centers, massive amounts of data and massive amounts of compute. For the moment Nvidia is dominant here, but they charge so much for their H100 GPUs that their clients will all look for cheaper alternatives. Tesla even built its own training system, called Dojo, in about one to two years. It's hard to imagine Nvidia staying without competition here for long.