Nvidia has announced a new automotive FSD chip, "Orin":

They claim 200 TOPS performance in a similar power envelope as Tesla's HW3 chip, which top line performance figure is higher than Tesla's FSD chip with 140 TOPS, but there's a number of key differences:

- Nvidia bases their 200 TOPS performance not on a large real-life FSD neural network, but on a usual INT8 benchmark that likely fully fits into their cache.

- Tesla has on-board SRAM which is directly addressable, i.e. there's no RAM traffic during inference calculations of loaded networks.

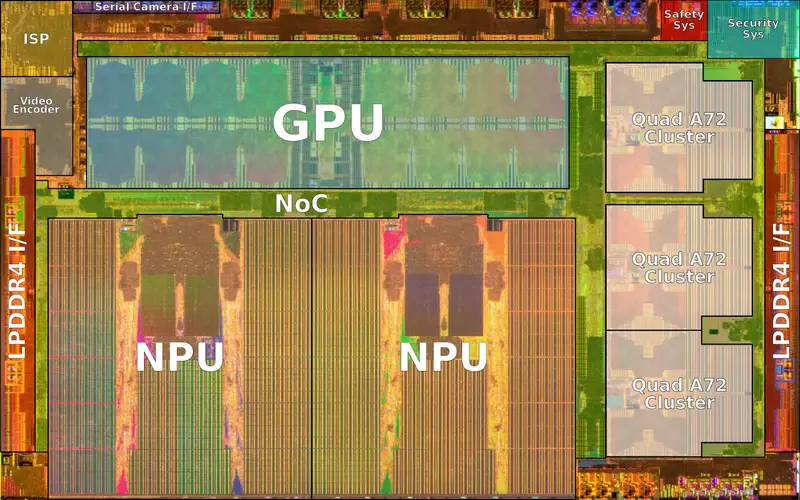

- Nvidia's new chip on the other hand still has visible cache structures on the CPU die:

Note that horizontal rectangular area in the middle-left area, that looks like a shared CPU cache to me. (But I could be misreading the die.)

Tesla has a very different chip design, which visibly differs from Nvidia's:

Note how the on-die SRAM areas (areas with vertical striping) are next to the NPUs, feeding a hierarchy of functional units.

I.e. I believe even Nvidia's latest chip doesn't have even

close to the real-world NN inference computing performance of Tesla's HW3 chip: Tesla's chip can load very big neural networks and do one inference calculation per cycle. Nvidia's chip is a traditional design and has to fall back to DRAM for large networks and has caching overhead.

Also, much of Nvidia's speedup is probably from the 7nm process they are using, while Tesla's HW3 chip is using a 14nm fab process.

The performance advantage from a process shrink is almost quadratic for simplified designs like Tesla's NN chip - i.e. Tesla's HW3 chip, when shrunk to a 10 nm or 7 nm process, would likely have real-world NN inference computing performance well beyond Nvidia's benchmark-only 200 TOPS.

TL;DR: Tesla's HW3 advantage is IMO not endangered by Nvidia's Orin.