I gotta say that Figure's demo and technical explanation are well, well beyond what Tesla has shown us. Indeed, Tesla might even be going down the wrong path in their robot AI development. Figure is able to do what it does WITHOUT Tesla's vaunted vision system. Instead, it has leveraged OpenAI's vision system.

It also supposedly does NOT use teleoperation for training, at least not anymore.

Meanwhile the last demo we saw from Tesla was teleop.

The last demo from Tesla was not about the software, it was about the hardware. It was meant to validate a design: that the hardware was good enough for the task of folding laundry. After that, they can do whatever they want to get the software to do it.

I think you guys are reading this wrongly. The demo is very cool, and Figure is making great progress. Some of it looks nice but is not that unique, like the conversation part, which is similar to Groq (with a q, not a k).

The hierarchical planning part is not too different from what we have seen before, but it is a lot smoother. I wonder how they got there, and whether they used some form of reinforcement learning, either in simulation or in the real world.

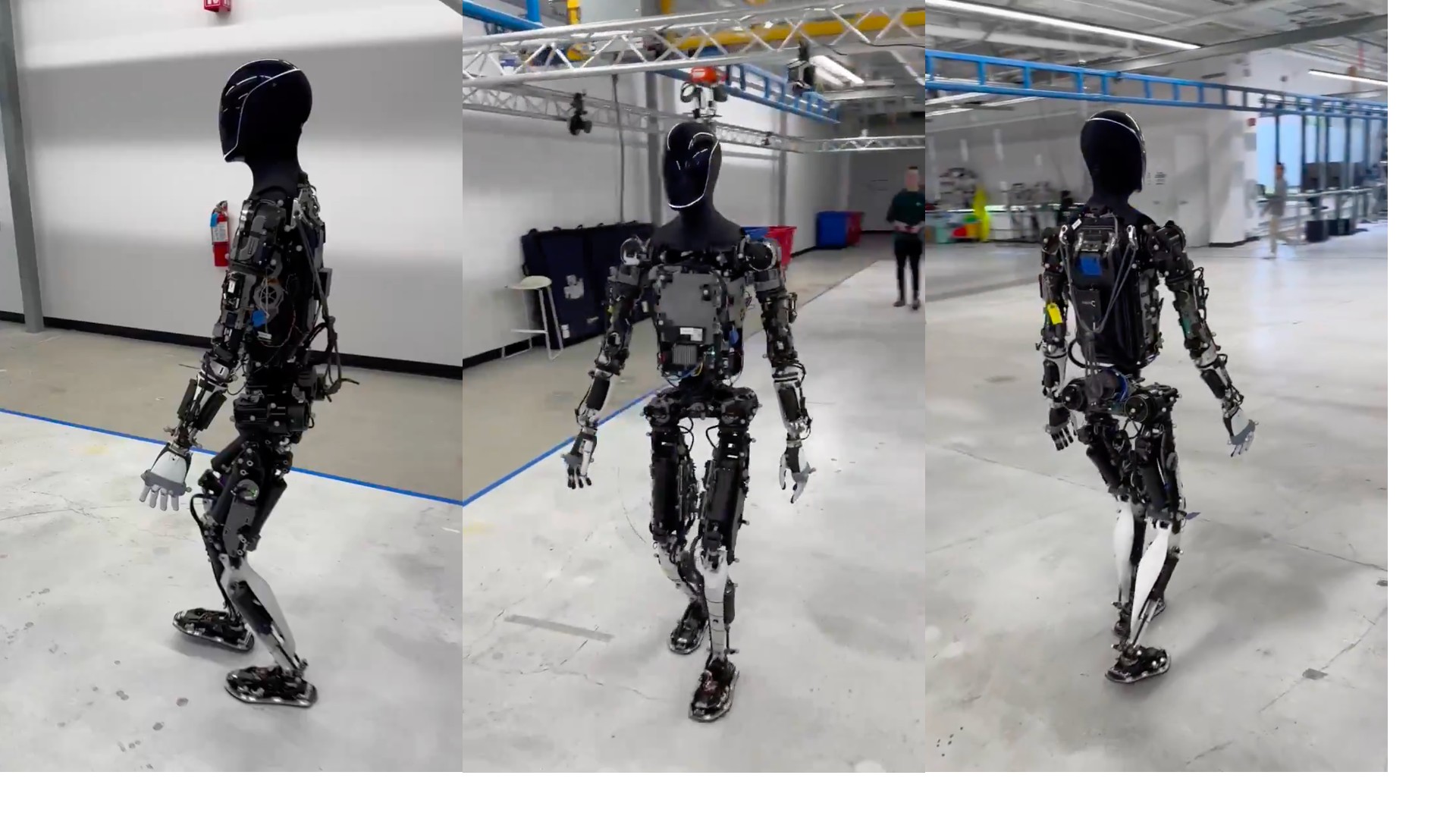

The hardware is nice, but it's a generation behind Tesla's, and I doubt that they have done as great a job of making it ready for mass production and crash testing.

Gathering data from a 3rd-person perspective rather than 1st-person is cool, but I don't think it's that big of a difference, and not much is wasted by gathering a lot of 1st-person data, as this will likely be very useful too. And Elon has been thinking about this for a long time: they want to be able to leverage the Tesla car dataset to see how people do tasks, e.g. the cars have seen a lot of roadside construction work...

All in all, I don't think this invalidates Tesla's approach. Very little of Tesla's work is useless. Figure is impressive, and OpenAI seems to be helping them, which gives Tesla a good contender on the software side. Their hardware is very good for a small startup, but it's still behind Tesla's.