Welcome to Tesla Motors Club

Discuss Tesla's Model S, Model 3, Model X, Model Y, Cybertruck, Roadster and More.


Tesla, TSLA & the Investment World: the Perpetual Investors' Roundtable

- Thread starter AudubonB

Ok good point, I'm leaning more towards your interpretation. This is a convention center loop. Three stops at different convention center buildings. No luggage.

StealthP3D

Well-Known Member

Where would you put comma.ai's openpilot in this race?

I have it in my pre-AP Model S, and it's surprisingly good. Beats AP1 hands down.

Openpilot is like Mike Tyson without a personal trainer or a gym to train at and without a promoter to land him the big fights.

Tslynk67

Well-Known Member

They plan on pushing this down to 1500 today. I don't know why but it appears to be the plan. I have cash standing by.

It's still broadly following the index, however I think someone jumped on this dip to hammer it down just that little bit more.

Standard tactic of shorty & co.

Edit: see it drops like crazy, but bounces harder too, so mostly just $TSLA being $TSLA, I think


StealthP3D

Well-Known Member

Nasdaq pooping, TSLA pooping.

No matter. It's all just noise until we have more data points to go on. And I hear the Pony Express is due to arrive soon!

It doesn't matter why they buy and hold, only that they buy and hold. It's unearned. They are not specifically buying Tesla because of anything Tesla is doing. Rather they are simply saving for retirement. Tesla is becoming a generic stock, held by many for no better reason than it is included in a major index.

Ok good point, I'm leaning more towards your interpretation.

To be fair, Boring's other projects will likely have purpose-built vehicles. Boring has submitted proposals for other tunnel systems which would require 12- or 16-person pods (presumably built by Tesla). But AFAIK Boring hasn't won any of these contracts yet. Again AFAIK, the Vegas convention center loop is the only contracted project so far. Note there is negotiation underway to extend this loop to the airport and the entire strip. At that point, luggage comes into play, etc.

I gotta say, picking between all of Elon's companies to invest in, Boring would be a very attractive investment indeed.

Edit: Just to give you perspective. Boring won this three-station contract with a $55M bid. A giant German company known for ski lifts, among many other things, put in a bid for $500M. That's what you call disruption.

lafrisbee

Active Member

The old neural network architecture labels one frame (2D) from a single camera and trains a neural network on the last two frames (2.5D: 2D (x, y) + 0.5D (~time)) to predict where objects are in the image (2D), then does some neural network magic to get it into bird's-eye view (~2.5D).

The new neural network architecture will take a video feed, generate a point cloud of all the static objects (3D) and of the moving objects (4D), and train a neural network to predict where static objects are (3D) and where dynamic objects will be (4D), based on the current image frames and recurrent information from the neural network at the previous timestep.

I think he is referring to how the neural network internally will be representing the information. If it needs to think in 4D it will start to think in 4D. In order to predict where a moving car will be in 4D space, which is needed in order to predict the depth of the next frame for example, the neural network will find an internal representation in vector form for this. See this video at 22:47
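To make the "4D" idea a bit more concrete, here is a minimal sketch of what predicting a moving object's next position could look like if you did it by hand. This is not Tesla's method: a real network would learn this internally. The `Point4D` type, the `predict_next` function, and the constant-velocity assumption are all hypothetical stand-ins chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Point4D:
    """A point in space plus the time it was observed: (x, y, z, t)."""
    x: float  # lateral position, metres
    y: float  # vertical position, metres
    z: float  # distance ahead of the camera (depth), metres
    t: float  # observation time, seconds


def predict_next(prev: Point4D, curr: Point4D, dt: float) -> Point4D:
    """Extrapolate dt seconds past curr, assuming constant velocity.

    Hypothetical stand-in for what a learned model would do implicitly.
    """
    elapsed = curr.t - prev.t
    if elapsed <= 0:
        raise ValueError("observations must be in increasing time order")
    scale = dt / elapsed
    return Point4D(
        x=curr.x + (curr.x - prev.x) * scale,
        y=curr.y + (curr.y - prev.y) * scale,
        z=curr.z + (curr.z - prev.z) * scale,
        t=curr.t + dt,
    )


# A car seen 20 m ahead, then 18 m ahead half a second later.
car_before = Point4D(x=0.0, y=0.0, z=20.0, t=0.0)
car_now = Point4D(x=1.0, y=0.0, z=18.0, t=0.5)
car_next = predict_next(car_before, car_now, dt=0.5)

# A static object (3D) simply stays where it is; only t advances.
sign_before = Point4D(x=5.0, y=1.0, z=30.0, t=0.0)
sign_now = Point4D(x=5.0, y=1.0, z=30.0, t=0.5)
sign_next = predict_next(sign_before, sign_now, dt=0.5)
```

The predicted `z` is the depth you would expect for that object in the next frame, which is the quantity the post says the network needs to anticipate.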

Here is a video about a similar technique:

OK?

When the guy in Northampton UK gave me a good understanding of this technical geek stuff I could handle it..."Some Bloke in a Pub wearing tweed in the Summer with a border collie at his feet musing over The Financial Times while the snaggle-toothed waitress slides a beef and kidney pie to 'im."

But YOU!!!

Come on!! this is too much...

So now some Vikinged-out Norseman with Swedish fur-lined bikini models lying on craggy rocks at his feet, gazing out over a mirror-finished fjord, while sipping vodka and looking at the Sun's reflection at friggin midnight is explaining to me how "the neural network internally will be representing the information"...

The internet and Tesla are really making the world a small place.

UkNorthampton

TSLA - 12+ startups in 1

I found that part interesting. Why would they operate multiple types of vehicles? The only answer I have is that they believe they are truly production constrained, which would explain the lack of the Y in there which would be a better choice overall. I'm guessing they plan to just fill in the fleet whenever they have excess inventory of 3s, Xs, S etc.

- Agree with you on using a mix of what's available/surplus.

- Form of selling the various products via test drives "We tried the X last trip but we're more likely to buy the ... let's ride that one next time". Especially to people who would otherwise NEVER get exposure.

- Autonomous data / FSD / different placement of cameras checking

- Recycle leased vehicles when returned?

- SEXY + Cyber and Roadster! Introduce each model - squeeze a Semi down there?

- Kids are into different models. So are adults... I was really surprised to see at a Tesla 'Shop' in Milton Keynes Intu that most of the interest / selfies were from first-generation Indian families / groups of friends and were for Model S (not sure there was an X there). This was just after Model 3 release and I was expecting loads to be looking at the 3. I was there for a while asking questions about delivery etc and saw quite a lot of activity along this theme. Other visits were similar. I never considered the S myself. Too big for my uses and for many roads in UK (in my opinion/preference).

Watching the SP go down (sure, just a tiny bit... but still) is funny. Right before the biggest potential catalyst in a long time.

I mean who would be selling?!

Sudre

Active Member

Looking at the trend and volume it appears this is the same exact hands that were pushing down yesterday afternoon. It is an exact fingerprint. It worked yesterday so they are starting earlier today. I wonder if they have the power to sustain it all day.

FreqFlyer

Active Member

What happened to the MPVs?

Or ARK invest??

Way more people can invest in the S&P500 in their retirement accounts than can invest in ARK ETFs in their retirement accounts.

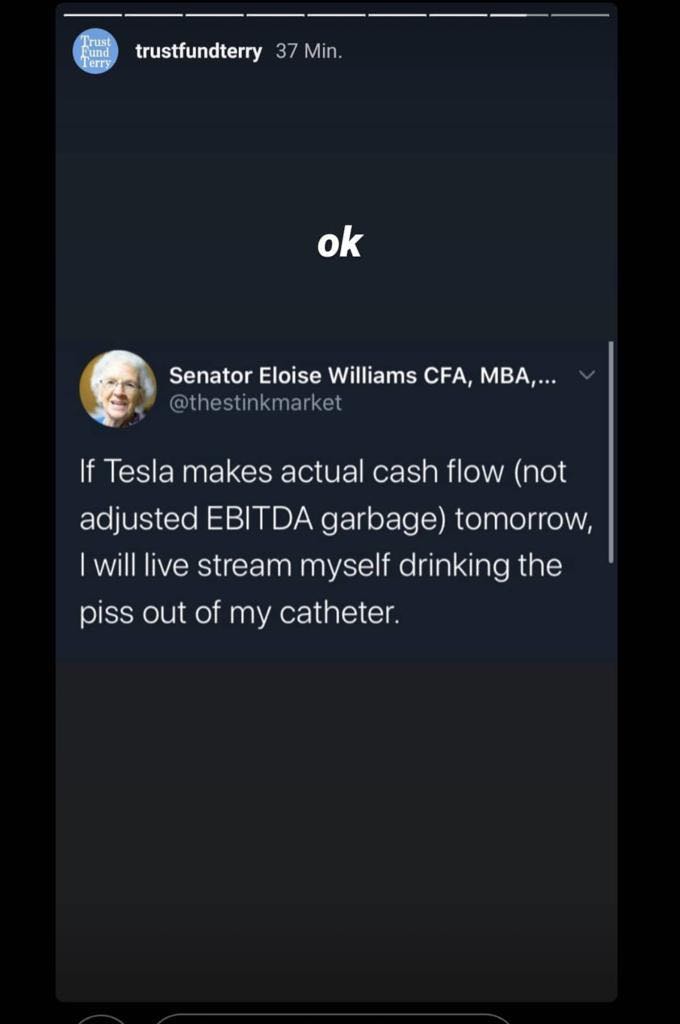

Who will win? This person, or McAfee? ‘Worthless Coin’ — McAfee Says He Never Believed Bitcoin Would Hit $1M


Tslynk67

Well-Known Member

Watching the SP go down (sure, just a tiny bit... but still) is funny. Right before the biggest potential catalyst in a long time.

I mean who would be selling?!

I think it's the same couple of thousand shares bouncing back and forth between some feuding algobots on rival servers...

Todd Burch

14-Year Member

NASDAQ wiping/flushing, TSLA now wiping/flushing as well. Hopefully they then both wash their hands.

Thumper

Active Member

I like the idea of gradual inclusion in the S&P. Better to have sustained buying pressure over time than a sudden spike and drop back.

What happened to the MPVs?

Plans changed. Not needed for this project. Will be needed for other projects if Boring wins the bids. Same thing with the elevator concept, this project doesn’t use them, just uses ramps.

Thumper

Active Member

Riding the Tesloop in Vegas will become a must-do activity for every visitor to the city. Fabulous exposure!

StealthP3D

Well-Known Member

@Curt Renz

Reddit user __TSLA__, who I believe is @Fact Checking, would like more context about your recent quote from Todd Rosenbluth.

It's not entirely clear from the quote whether Todd is suggesting that index funds usually get ~7 days' notice ahead of inclusion, separate from the official public announcement, or whether he is referring to the public announcement itself.

I think it's pretty clear that this is not the type of thing in which index funds would be granted an inside scoop. It has to be released to all investors simultaneously. Index funds are simply investors.
