This is absolutely the case.

Recently there was a terrible tragedy in Bellevue, WA. Someone trying to avoid being hit by another car swerved and struck a baby in a stroller on the sidewalk, killing the baby instantly.

Whenever I read anything about "ethical" choices and whether AI can be as good as humans, I'm reminded of this, and I hope AI is better.

The CAR will probably see the baby (unless it's obscured by something), and "hit a car, not a pedestrian" is a basic rule everyone already accepts. I don't think anyone would argue with it.

A person probably won't be able to see the baby and work out that it's better to hit the car, because there's no time for that. Otherwise I think everyone would choose to hit the car, even someone with no ethics at all, since killing a person is surely far more costly (jail, compensation, etc.) than hitting a car.

The ethical problem arises when you have to choose between two outcomes of the same class.

The choices are simple:

- Class A: Hit nothing

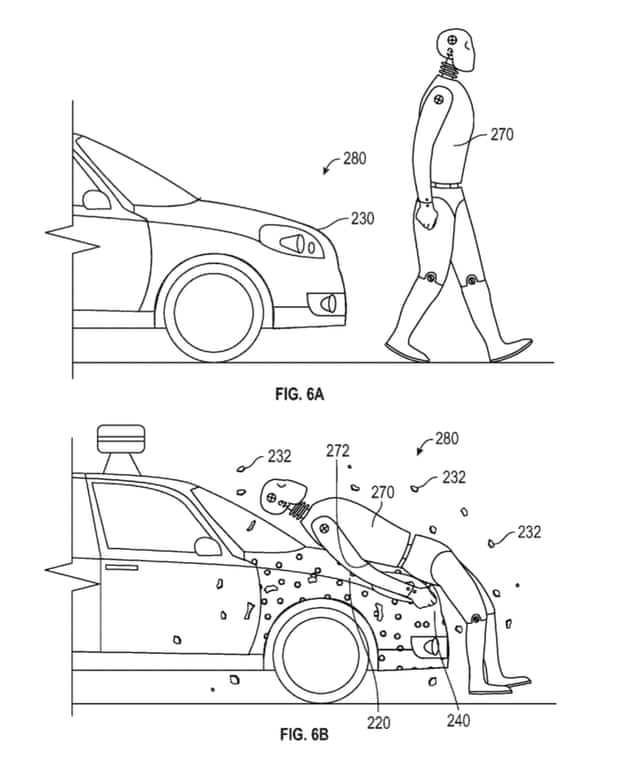

- Class B: Hit animated object

- Class C: Hit car

- Class D: Hit pedestrian

When you have to choose between class A and class B, the answer is clearly class A.

B vs. D? B. C vs. B? B. And so on.

The problem is C vs. C, or D vs. D. In that case a human surely can't choose; there's no time. A computer, on the other hand, probably can't distinguish between two options of the same class either, so it will most likely pick the less risky one (more braking distance or similar). But as I said, that isn't an ethical decision; it's just that no real decision can be made.
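To make the rule above concrete, here is a minimal sketch of how a car might rank its options under this scheme: pick the lowest class first, and only when two options share a class fall back to a risk measure. The names `Severity`, `Outcome`, `choose`, and `braking_distance_m` are hypothetical, and using braking distance as the tie-breaker is an assumption taken from the "more braking space or similar" remark, not how any real vehicle works.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Lower value = preferred outcome, following the A-D ranking above.
    NOTHING = 0          # Class A: hit nothing
    ANIMATED_OBJECT = 1  # Class B: hit animated object
    CAR = 2              # Class C: hit car
    PEDESTRIAN = 3       # Class D: hit pedestrian


@dataclass
class Outcome:
    # Hypothetical description of one possible maneuver.
    name: str
    severity: Severity
    braking_distance_m: float  # assumed risk proxy: more room to brake = less risky


def choose(outcomes):
    # Lowest class wins; within the same class, prefer the option with the
    # most braking distance (a risk rule, not an ethical judgment).
    return min(outcomes, key=lambda o: (o.severity, -o.braking_distance_m))


if __name__ == "__main__":
    options = [
        Outcome("swerve left", Severity.CAR, 12.0),
        Outcome("swerve right", Severity.PEDESTRIAN, 20.0),
        Outcome("brake straight", Severity.CAR, 18.0),
    ]
    print(choose(options).name)  # brake straight
```

Note that the class comparison does all the "ethical" work; the tie-break inside a class is just arithmetic on distance, which is the point being made about C vs. C.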