I thought I would write a more detailed summary for people who may not have had a chance to watch the entire video:

Introduction

Waymo is the only company in the world running a 24/7, commercial, fully autonomous ride-hailing service (since 2020)

10k+ rides/week (public riders, not including employees)

2M+ "rider-only" miles (no safety driver)

10M+ autonomous miles (with safety driver)

10B+ simulation miles

Tested in all weather conditions

Progress

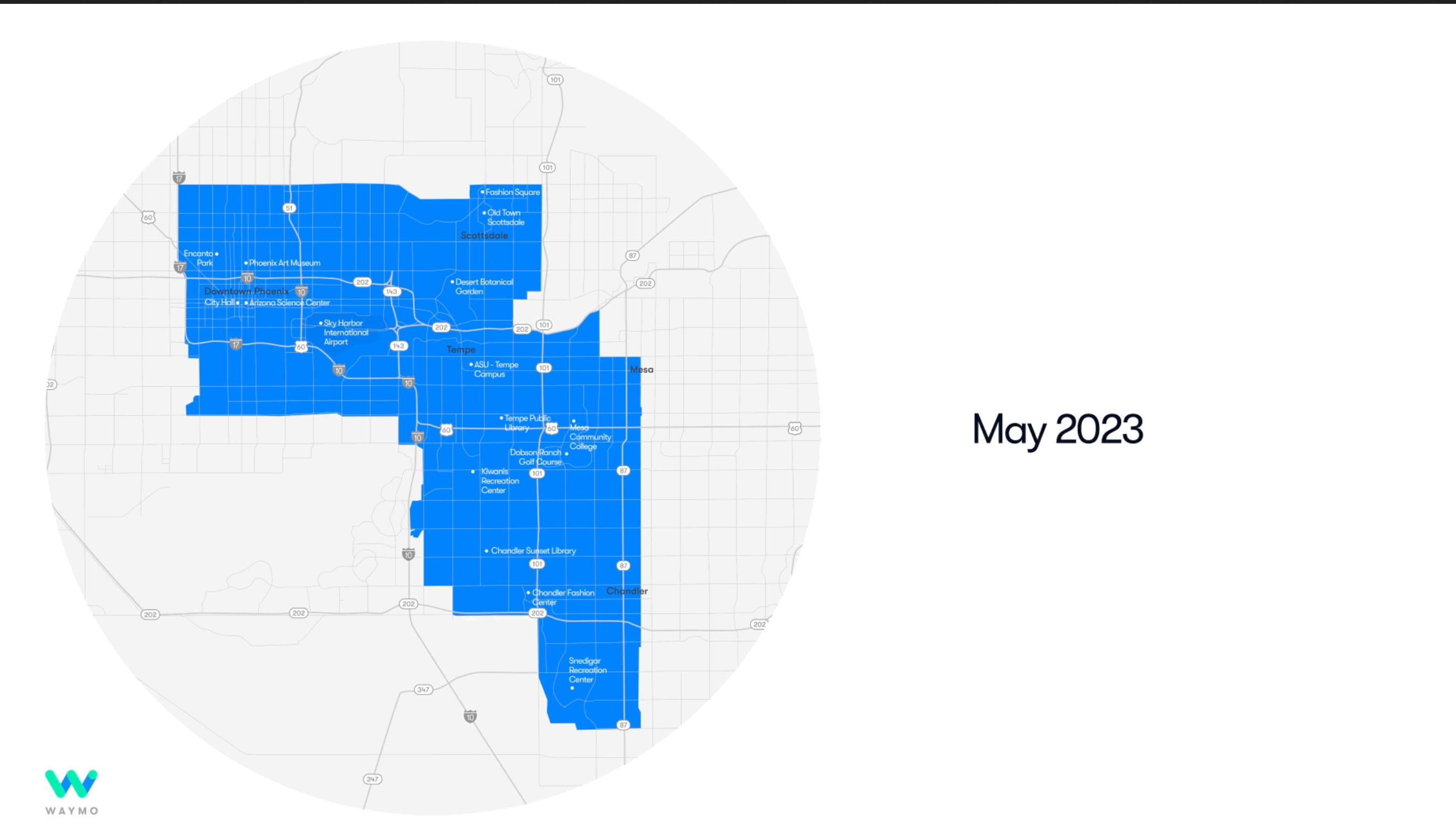

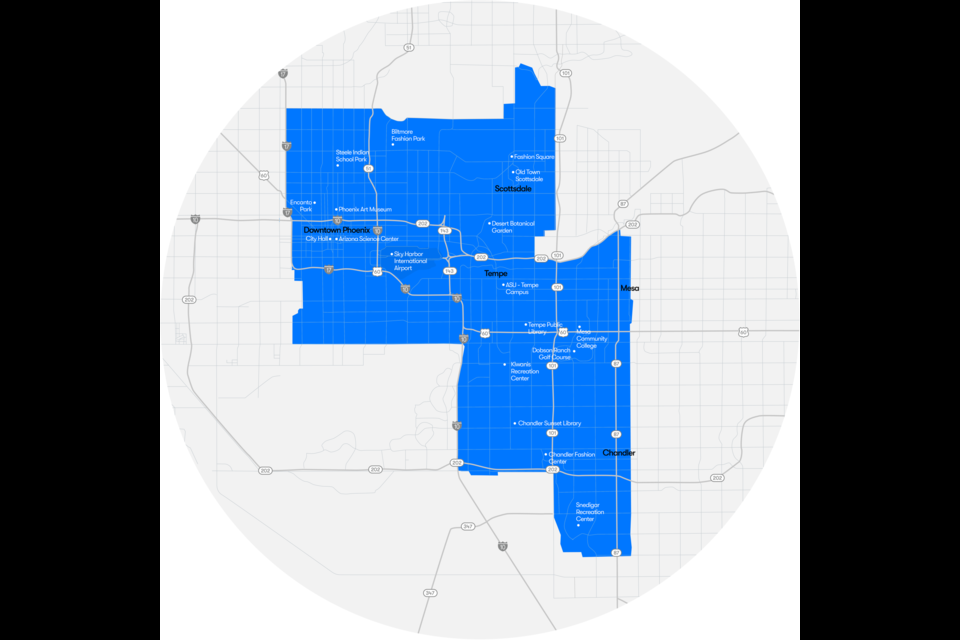

24/7, fully autonomous (no safety driver) ride-hailing service covering 47 sq mi in SF and 180 sq mi in metro Phoenix

Intent to scale 10x by next summer

Currently testing with no safety driver in LA, which will be the next fully autonomous ride-hailing service. LA's ODD (operational design domain) is "in between" SF and Phoenix, so it will allow fairly rapid deployment.

Over the past year, Waymo improved performance in adverse weather (rain, fog), its ability to navigate construction zones (including responding to temporary signs from construction workers), and back-to-back lane changes in dense traffic.

Safety Data

Waymo published safety data on its first 1M driverless miles. No injuries. 55% of incidents involved human drivers hitting a stationary Waymo. No incidents at intersections. No incidents with vulnerable road users (pedestrians, etc.). Only 2 collisions that met NHTSA reporting criteria, plus 18 minor contact events. 10% of all incidents happened at night.

Core Architecture & Long Tail

Waymo is continuously improving its core architecture and taking a systematic approach to solving the long tail. The better the core architecture, the smaller the "long tail" becomes. Waymo's approach is built on a foundation of data, evaluation, machine learning infrastructure and simulation.

Core Architecture

Waymo is focused on improving the core architecture in two areas: motion prediction for many objects at once and long-range lidar 3D object detection.

Motion Prediction

Waymo developed improved multi-agent motion prediction using diffusion. The network takes the scene as input (road, cars, pedestrians, traffic lights, etc.), starts from random, noisy paths for the objects, and passes them through a denoiser that progressively removes the noise until it outputs a clean, consistent set of paths for all objects. Starting from different random noise yields different plausible futures. The advantage is that it does not require pre-defined "anchors" (fixed template trajectories).
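
To make the "start from noise, then denoise" idea concrete, here is a toy sketch. It is not Waymo's model: the denoiser is not a trained network but the exact posterior-mean formula for an assumed Gaussian spread (`sigma`) of futures around a hypothetical constant-velocity guess, and the cosine noise schedule and deterministic DDIM-style update are standard diffusion choices picked for illustration. All function names and values are made up.

```python
import math
import random

def constant_velocity_mean(agents, horizon, dt=0.5):
    """Hypothetical scene-conditioned guess: each agent keeps its current velocity.
    agents: list of (x, y, vx, vy). Returns one flat vector [x1, y1, x2, y2, ...] for all agents."""
    mean = []
    for x, y, vx, vy in agents:
        for k in range(1, horizon + 1):
            mean.extend([x + vx * dt * k, y + vy * dt * k])
    return mean

def gaussian_denoiser(x_t, a_t, s_t, mean, sigma):
    """Exact E[x0 | x_t] when x0 ~ N(mean, sigma^2 I) and x_t = a_t * x0 + s_t * noise.
    Stands in for a trained, scene-conditioned denoising network."""
    gain = a_t * sigma ** 2 / (a_t ** 2 * sigma ** 2 + s_t ** 2)
    return [m + gain * (x - a_t * m) for x, m in zip(x_t, mean)]

def sample_joint_future(agents, horizon=8, steps=20, sigma=0.3, seed=0):
    """Deterministic DDIM-style sampling of one joint future for all agents."""
    rng = random.Random(seed)
    mean = constant_velocity_mean(agents, horizon)
    # Variance-preserving cosine schedule: a_t^2 + s_t^2 = 1, (almost) pure noise at t = steps.
    a = [math.cos(math.pi / 2 * t / steps) for t in range(steps + 1)]
    s = [math.sin(math.pi / 2 * t / steps) for t in range(steps + 1)]
    x = [rng.gauss(0.0, 1.0) for _ in mean]  # every waypoint of every agent starts as noise
    for t in range(steps, 0, -1):
        x0_hat = gaussian_denoiser(x, a[t], s[t], mean, sigma)
        eps_hat = [(xi - a[t] * x0i) / s[t] for xi, x0i in zip(x, x0_hat)]
        # Move to the next, less noisy level; at t = 1 this returns x0_hat itself.
        x = [a[t - 1] * x0i + s[t - 1] * ei for x0i, ei in zip(x0_hat, eps_hat)]
    return x, mean

agents = [(0.0, 0.0, 10.0, 0.0), (5.0, 3.0, 0.0, -1.0)]  # (x, y, vx, vy), made-up values
future, guess = sample_joint_future(agents)
another, _ = sample_joint_future(agents, seed=1)  # different noise -> different joint future
```

Because this toy denoiser treats each coordinate independently, it does not capture interactions between agents; a real multi-agent model would denoise all agents jointly, conditioned on the scene. Note that no anchor set appears anywhere: the variety of futures comes from the starting noise.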

Lidar 3D Object Detection at Long Range

Waymo's lidar has a range of 300m+. At longer ranges, the lidar point cloud becomes sparser, so object detection becomes more difficult. To address this, Waymo developed a sparse window transformer network that can detect 3D objects more efficiently from fewer points.
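
A rough sketch of why working sparsely saves compute, assuming a simple bird's-eye-view voxel grid: instead of running a dense network over every cell of a 300m+ grid, a sparse approach keeps only occupied voxels and groups them into fixed-size windows, so attention inside each window only touches cells that actually contain points. This shows the windowing step only, not the transformer itself; the voxel size, window size and function names are illustrative assumptions, not Waymo's settings.

```python
import math
from collections import defaultdict

def voxelize(points, voxel_size=0.5):
    """Map (x, y, z) lidar points to the set of occupied BEV voxel indices (ix, iy)."""
    return {(math.floor(x / voxel_size), math.floor(y / voxel_size)) for x, y, _ in points}

def group_into_windows(voxels, window=8):
    """Group occupied voxels by the window (a tile of window x window voxels) they fall in."""
    windows = defaultdict(list)
    for ix, iy in sorted(voxels):
        windows[(ix // window, iy // window)].append((ix, iy))
    return dict(windows)

def attention_pairs(windows):
    """Voxel pairs that self-attention restricted to each window would compare."""
    return sum(len(members) ** 2 for members in windows.values())

# Two nearby returns share a voxel; a distant return sits alone in its own window.
points = [(1.0, 1.0, 0.0), (1.2, 1.1, 0.0), (3.9, 0.2, 0.0), (250.0, 10.0, 0.5)]
voxels = voxelize(points)
windows = group_into_windows(voxels)
```

Empty space costs nothing here: a dense 600 m x 600 m grid at 0.5 m would have 1,440,000 cells, while this example only ever touches the 3 occupied voxels.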

Long Tail

To solve the long tail, Waymo is focused on two areas: identifying rare examples for the training set and improving unsupervised perception and prediction.

Rare example mining

Objects can be easy or hard to detect (e.g., an occluded object is hard to detect). Objects can also be common or rare, and objects that are difficult to detect are not necessarily rare. Identifying rare examples lets you add them to your training set, which helps your autonomous driving system better handle those edge cases. Waymo proposes a model-centric and a data-centric approach. The model-centric approach defines rare as uncertain objects minus difficult objects. The data-centric approach estimates the rareness of an object from the probability density of the data sample: the lower the density, the rarer the example.
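
Both definitions can be sketched in a few lines. This is a simplified illustration, not the method from the talk: the data-centric score uses a Gaussian kernel density estimate over hypothetical 2-D object feature vectors (rareness = negative log density), and the model-centric score subtracts a hypothetical per-object difficulty estimate from a hypothetical uncertainty estimate. Features, bandwidth and function names are all assumptions.

```python
import math

def kde_log_density(sample, dataset, bandwidth=1.0):
    """Log of an isotropic Gaussian kernel density estimate at `sample`."""
    d = len(sample)
    log_norm = -0.5 * d * math.log(2 * math.pi * bandwidth ** 2)
    exps = [-sum((a - b) ** 2 for a, b in zip(sample, x)) / (2 * bandwidth ** 2) for x in dataset]
    m = max(exps)
    # log-sum-exp keeps far-away samples from underflowing to log(0)
    return log_norm + m + math.log(sum(math.exp(e - m) for e in exps)) - math.log(len(dataset))

def data_centric_rareness(sample, dataset, bandwidth=1.0):
    """Lower density under the training distribution means a rarer example."""
    return -kde_log_density(sample, dataset, bandwidth)

def model_centric_rareness(uncertainty, difficulty):
    """'Uncertain minus difficult': uncertainty that difficulty (e.g. occlusion) does not explain."""
    return uncertainty - difficulty

def mine_rare(candidates, dataset, top_k=1, bandwidth=1.0):
    """Return the top_k candidates with the highest data-centric rareness."""
    return sorted(candidates, key=lambda c: data_centric_rareness(c, dataset, bandwidth),
                  reverse=True)[:top_k]

# Made-up feature vectors: a common cluster near the origin plus one unusual object.
dataset = [(0.0, 0.0), (0.2, 0.1), (-0.1, 0.1), (0.1, -0.2), (5.0, 5.0)]
candidates = [(0.0, 0.0), (5.0, 5.0), (40.0, 40.0)]
rare = mine_rare(candidates, dataset, top_k=2)
```

The mined examples would then be sent for labeling and added to the training set; an occluded but common object (high uncertainty, high difficulty) scores low under the model-centric definition, which is exactly the distinction between "hard" and "rare".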

Results: using only 13% of the labeled data plus rare example mining, Waymo achieved 30.97% better detection of rare large vehicles than with the same 13% of labeled data without rare example mining, and was only 5% worse than fully supervised training (100% of the labeled data).

Motion inspired unsupervised perception and prediction

The idea is to use unsupervised learning to auto-label new objects based on their motion. You can then train object detection and prediction on these auto-labeled objects, which allows your autonomous vehicle to handle new objects it has not been trained on. Waymo has a NN that takes raw lidar sequences, estimates motion and then auto-labels objects. Waymo uses these auto-labels to train its 3D object detector and trajectory predictor.
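
A minimal sketch of motion-based auto-labeling, under stated assumptions: per-point motion (scene flow) is assumed to be already estimated and given as a velocity per lidar point; moving points are kept with a speed threshold, grouped with a simple distance-based clustering, and each cluster becomes an axis-aligned box with its mean velocity. The thresholds, clustering method and names are illustrative, not Waymo's pipeline.

```python
import math

def cluster_moving_points(points, speed_thresh=0.5, radius=1.0):
    """points: list of (x, y, vx, vy). Single-linkage clusters of the points that are moving."""
    moving = [p for p in points if math.hypot(p[2], p[3]) > speed_thresh]
    unvisited = set(range(len(moving)))
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        queue, members = [seed], [seed]
        while queue:  # breadth-first growth: absorb every unvisited point within `radius`
            i = queue.pop()
            near = [j for j in unvisited
                    if math.hypot(moving[i][0] - moving[j][0], moving[i][1] - moving[j][1]) <= radius]
            for j in near:
                unvisited.remove(j)
                queue.append(j)
                members.append(j)
        clusters.append([moving[i] for i in members])
    return clusters

def auto_label(points, **kwargs):
    """Turn each moving cluster into a pseudo-label: BEV box plus mean velocity."""
    labels = []
    for cluster in cluster_moving_points(points, **kwargs):
        xs = [p[0] for p in cluster]
        ys = [p[1] for p in cluster]
        vx = sum(p[2] for p in cluster) / len(cluster)
        vy = sum(p[3] for p in cluster) / len(cluster)
        labels.append({"box": (min(xs), min(ys), max(xs), max(ys)), "velocity": (vx, vy)})
    return labels

# Made-up scene: a walking pedestrian, a static wall, and a moving car.
pts = [(0.0, 0.0, 1.0, 0.0), (0.5, 0.0, 1.0, 0.0),
       (10.0, 0.0, 0.0, 0.0), (10.0, 1.0, 0.0, 0.0),
       (20.0, 0.0, 5.0, 0.0), (20.8, 0.0, 5.0, 0.0), (21.6, 0.0, 5.0, 0.0)]
labels = sorted(auto_label(pts), key=lambda l: l["box"])
```

Nothing in this sketch needs a class name: anything that moves gets a box and a velocity, which is why labels like these can cover categories a supervised detector never saw. The boxes and velocities would then serve as training targets for a detector and a trajectory predictor.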

Example: a person with a stroller where only the person is labeled. The unsupervised 3D object detection auto-labels both the person and the stroller and predicts their motion. Other examples include movers pushing a garbage bin, or a person carrying a large box so that only their legs are visible; in these cases the system also correctly auto-labels the objects.

Results: a fully supervised detector fails to generalize to new categories, while unsupervised auto-labels on unknown categories improve generalization.

Conclusion

Achieving an excellent product requires a holistic approach. It's not just about improving the software but also the hardware, for example making sure the hardware is self-cleaning and resistant to damage.

Example: adverse weather can impact an AV system in many different ways. Wet roads can create reflections that confuse cameras; fog, mist and rain can alter sensor data; droplets, dirt or ice can foul the surface of sensors; and wet or icy roads are slippery, reducing friction.

Waymo can drive in "almost all fog" and "most rain conditions".

Existing weather stations lack the precision and specificity needed to help autonomous vehicles drive. Waymo leverages the sensors on each vehicle to turn it into a "mobile weather station", crowdsourcing millions of data points from the fleet to build a first-of-its-kind real-time weather map that supports its ride-hailing operations.
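
The crowdsourcing step can be pictured as binning per-vehicle observations into a spatial grid and keeping only recent ones. In this sketch the report format (lat/lon, timestamp, a 0-1 "wetness" score), the cell size and the time window are all hypothetical; the talk did not describe how Waymo's weather map is actually built.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class WeatherReport:
    lat: float
    lon: float
    timestamp: float  # seconds
    wetness: float    # hypothetical 0..1 score derived from onboard sensors

def build_weather_map(reports, now, cell_deg=0.01, max_age_s=600.0):
    """Average recent reports per grid cell -> {(row, col): (mean_wetness, count)}."""
    sums = defaultdict(lambda: [0.0, 0])
    for r in reports:
        if now - r.timestamp > max_age_s:
            continue  # stale observation, ignore it
        cell = (int(r.lat // cell_deg), int(r.lon // cell_deg))
        sums[cell][0] += r.wetness
        sums[cell][1] += 1
    return {cell: (total / n, n) for cell, (total, n) in sums.items()}

reports = [
    WeatherReport(37.7751, -122.4191, 1000.0, 0.25),
    WeatherReport(37.7752, -122.4192, 1100.0, 0.75),
    WeatherReport(37.7753, -122.4193, 100.0, 1.0),   # too old, dropped
    WeatherReport(37.8051, -122.4191, 1150.0, 0.0),  # different cell
]
weather_map = build_weather_map(reports, now=1200.0)
```

The point of using the fleet is density: many vehicles passing through the same cell give fresh, street-level readings that a sparse network of fixed weather stations cannot.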

Waymo vehicles can automatically clean their sensors when they detect dirt, condensation, etc.
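
One plausible way to decide when to clean, sketched with entirely hypothetical thresholds: estimate what fraction of a sensor's view is blocked in each frame and trigger cleaning only when that fraction stays above a threshold for several consecutive frames, with a cooldown afterwards, so a single splash does not fire the cleaner. The `blocked_fraction` input and all parameters are assumptions, not Waymo's actual logic.

```python
class CleaningTrigger:
    """Request a sensor cleaning when blockage persists (hypothetical thresholds)."""

    def __init__(self, threshold=0.2, persist_frames=3, cooldown_frames=10):
        self.threshold = threshold
        self.persist_frames = persist_frames
        self.cooldown_frames = cooldown_frames
        self._streak = 0    # consecutive frames above threshold
        self._cooldown = 0  # frames to wait after a cleaning before re-arming

    def update(self, blocked_fraction):
        """Feed one frame's blocked fraction (0..1); return True if cleaning should start."""
        if self._cooldown > 0:
            self._cooldown -= 1
            return False
        self._streak = self._streak + 1 if blocked_fraction > self.threshold else 0
        if self._streak >= self.persist_frames:
            self._streak = 0
            self._cooldown = self.cooldown_frames
            return True
        return False

trigger = CleaningTrigger()
decisions = [trigger.update(f) for f in [0.5, 0.0, 0.5, 0.5, 0.5, 0.5]]
```

Requiring persistence trades a few frames of delay for fewer needless cleaning cycles.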

Waymo tests its sensors to make sure they can withstand small impacts (for example, at highway speeds it is not uncommon for bugs or small pebbles to hit the sensors). The vehicle needs to be able to keep driving, or pull over, when one or more sensors are degraded.
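
The "keep driving or pull over" requirement can be framed as a coverage check. In this toy version, each sensor is assumed to cover a set of named sectors around the vehicle, and a hypothetical rule says the car keeps driving only if every sector is still seen by at least `min_redundancy` healthy sensors; otherwise it pulls over. Sensor names, sectors and the rule itself are made up for illustration.

```python
SECTORS = ("front", "left", "right", "rear")

def fallback_action(sensor_coverage, healthy, min_redundancy=2):
    """sensor_coverage: {sensor_name: set of sectors}; healthy: set of working sensor names."""
    for sector in SECTORS:
        seen_by = sum(1 for name, sectors in sensor_coverage.items()
                      if name in healthy and sector in sectors)
        if seen_by < min_redundancy:
            return "pull_over"  # this sector has lost its required redundancy
    return "continue"

coverage = {
    "roof_lidar": {"front", "left", "right", "rear"},
    "front_camera": {"front"},
    "left_radar": {"left", "front"},
    "right_radar": {"right", "front"},
    "rear_camera": {"rear", "left", "right"},
}
all_ok = fallback_action(coverage, set(coverage))
no_front_cam = fallback_action(coverage, set(coverage) - {"front_camera"})
no_roof_lidar = fallback_action(coverage, set(coverage) - {"roof_lidar"})
```

With overlapping coverage, losing one camera leaves enough redundancy to continue, while losing the roof lidar leaves the rear sector seen by a single sensor and triggers a pull-over.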

cc @Bladerskb I hope I accurately represented the info. The technical machine learning parts were a bit over my head.