I was going to stop posting, since it seems futile when guys like @JeffK and @Reciprocity say Tesla is light years ahead.

Plus, we are at the point where discussion is pointless: the end of 2017 is only months away. Either Tesla has FSD that is better than a human driver, or it doesn't.

I do, however, like this thread, because it filters out the Tesla-only fans who don't care about facts.

Facts like:

A) Highway driving is a lot easier than driving on suburban and urban roads. That is why a cross-country drive proves almost nothing: whichever parking lot to parking lot route you pick, less than 5 miles of it are urban streets.

Here is a glimpse of what highway driving looks like vs. urban streets.

One of the things we do subconsciously is that we predict.

We predict that a guy in his car looking down at his phone doesn't see us and that we should take evasive action.

We predict that the car in the lane next to us is blocked by the car ahead of it and that we need to slow down and let it into our lane.

We predict that the kid running across the street is trying to catch the bus stopping in front of us in the next lane, so we should watch out and slow down in anticipation of him.

Compare that with highway driving, where you have to make little or no prediction at all because you are simply driving forward in your lane.

Here's what predicting where every car, motorcycle, object, and pedestrian is going looks like.
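To show why this is hard, here is a minimal sketch of the crudest possible prediction: assume every tracked object keeps its current velocity and check how close its predicted path comes to ours. The `Track` class, the ego-frame coordinates and the example numbers are all hypothetical. A constant-velocity model can flag the collision below, but it has no notion of intent (the kid running for the bus, the driver looking at his phone), which is exactly the part humans do subconsciously.

```python
from dataclasses import dataclass


@dataclass
class Track:
    """A tracked road user: position (m) and velocity (m/s) in a hypothetical ego frame."""
    kind: str
    x: float
    y: float
    vx: float
    vy: float


def predict_constant_velocity(track: Track, horizon_s: float, dt: float = 0.5):
    """Extrapolate future positions assuming the object keeps its current velocity."""
    steps = int(round(horizon_s / dt))
    return [(track.x + track.vx * dt * k, track.y + track.vy * dt * k)
            for k in range(1, steps + 1)]


def min_gap(path_a, path_b):
    """Smallest distance between two predicted paths at matching timesteps."""
    return min(((ax - bx) ** 2 + (ay - by) ** 2) ** 0.5
               for (ax, ay), (bx, by) in zip(path_a, path_b))


if __name__ == "__main__":
    ego = Track("car", 0.0, 0.0, 10.0, 0.0)          # us, driving at 10 m/s
    kid = Track("pedestrian", 20.0, 6.0, 0.0, -3.0)  # crossing toward our lane
    ego_path = predict_constant_velocity(ego, horizon_s=3.0)
    kid_path = predict_constant_velocity(kid, horizon_s=3.0)
    print(f"closest predicted gap: {min_gap(ego_path, kid_path):.1f} m")
```

Real systems replace the constant-velocity assumption with learned or rule-based intent models for every class of road user, which is where most of the difficulty lives.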

The idea that you can record steering angle, throttle and brake status, and the camera feed from a fleet of 100,000 cars and in no time have FSD is preposterous.

Researchers and companies everywhere use simulators, and even games like GTA V. Today we can get 1 million miles of data per day from GTA, and even with all that data you can't make a car fully self-driving. Neural networks are regression algorithms: they learn a mapping from inputs to outputs from labeled examples. They are great at generalizing when you have 500,000 examples of human-labeled and curated data, but bad at unsupervised learning from noisy data. That is why task-specific networks are used.
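To make "regression on human-labeled data" concrete, here is a minimal sketch: a single linear neuron fit by gradient descent on mean squared error, mapping a lateral offset to a steering command. The data is synthetic and the true rule (`-0.5 * offset`) is a made-up assumption; a real steering network is a deep CNN on raw pixels, but it is trained on the same principle of minimizing error against human-provided labels.

```python
import random


def make_examples(n: int, seed: int = 0):
    """Synthetic 'human-labeled' pairs: lateral offset (m) -> steering command.

    The underlying rule (-0.5 * offset) is a hypothetical assumption."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        offset = rng.uniform(-1.0, 1.0)
        steering = -0.5 * offset + rng.gauss(0.0, 0.02)  # small label noise
        data.append((offset, steering))
    return data


def fit_single_neuron(data, lr: float = 0.1, epochs: int = 200):
    """Fit steering = w * offset + b by full-batch gradient descent on MSE."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y
            grad_w += 2 * err * x / n
            grad_b += 2 * err / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


if __name__ == "__main__":
    w, b = fit_single_neuron(make_examples(500))
    print(f"learned w={w:.3f}, b={b:.3f}")
```

The model only recovers the rule because every example is clean and labeled; feed it unlabeled or mislabeled frames and there is nothing for it to regress toward.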

Simulators are available today, and the fairytale version of fleet learning still doesn't work.

The CNN at work there is similar to the one NVIDIA uses. But there are problems with overfitting and underfitting the data. How hard is the problem, you ask? NVIDIA, for example, took 7,776,000 pictures mapped to steering, throttle and brake data, which were then curated.
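As a sanity check on the size of that number: assuming (our assumption, not stated above) that it corresponds to about 72 hours of driving video recorded at 30 frames per second, the arithmetic works out exactly:

```python
HOURS_OF_DRIVING = 72  # assumption: hours of recorded driving video
FPS = 30               # assumption: camera frame rate

frames = HOURS_OF_DRIVING * 60 * 60 * FPS
print(f"{frames:,} frames")  # 7,776,000 frames
```

So the figure is millions of frames, not millions of millions, and only a fraction of them survive the curation steps described next.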

Here's how those almost 8 million pictures were curated by humans. They kept only pictures of the car driving as perfectly as possible in its lane and removed every other picture: pulling out of the driveway, entering and exiting a lane, and so on were all excluded. The reason the car can drive is that it has seen millions of curated pictures of itself driving inside a lane.

"To train a CNN to do lane following we only select data where the driver was staying in a lane and discard the rest."
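The selection step in that quote amounts to a filter over labeled frames. Here is a minimal sketch, assuming each frame carries a hypothetical human-assigned `maneuver` label; NVIDIA does not publish its data format, so the class and label names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    """One recorded camera frame plus the driver's steering at that moment.

    `maneuver` is a hypothetical human-assigned label."""
    image_id: int
    steering: float
    maneuver: str  # e.g. "lane_follow", "lane_change", "driveway"


def select_lane_following(frames):
    """Keep only frames where the driver was staying in a lane; discard the rest."""
    return [f for f in frames if f.maneuver == "lane_follow"]


if __name__ == "__main__":
    recorded = [
        Frame(0, 0.00, "driveway"),
        Frame(1, 0.01, "lane_follow"),
        Frame(2, 0.20, "lane_change"),
        Frame(3, -0.02, "lane_follow"),
    ]
    kept = select_lane_following(recorded)
    print([f.image_id for f in kept])
```

The expensive part is not the filter but producing a trustworthy `maneuver` label for every frame in the first place.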

But that's not enough. The data has to be curated even further, otherwise the network will be overfitted to driving straight and will fail in curves and corners. So more discarding and rebalancing happens.

"To remove a bias towards driving straight the training data includes a higher proportion of frames that represent road curves."
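One simple way to get "a higher proportion of frames that represent road curves" is to oversample curve frames until they reach a target share of the dataset. This is a minimal sketch of that idea, not NVIDIA's published procedure; the steering threshold that defines a "curve" and the target fraction are made-up parameters.

```python
import random
from dataclasses import dataclass


@dataclass
class Frame:
    image_id: int
    steering: float  # hypothetical units: negative = left, positive = right


def rebalance_curves(frames, curve_threshold=0.1, curve_fraction=0.5, seed=0):
    """Oversample curve frames (|steering| above a threshold) until they make up
    roughly `curve_fraction` of the dataset. Only adds duplicates; it never
    drops frames. `curve_fraction` must be strictly between 0 and 1."""
    rng = random.Random(seed)
    curves = [f for f in frames if abs(f.steering) > curve_threshold]
    straights = [f for f in frames if abs(f.steering) <= curve_threshold]
    if not curves:
        return list(frames)
    # Number of curve frames needed so that curves / total == curve_fraction.
    target = int(round(curve_fraction * len(straights) / (1 - curve_fraction)))
    extra = [rng.choice(curves) for _ in range(max(0, target - len(curves)))]
    balanced = straights + curves + extra
    rng.shuffle(balanced)
    return balanced


if __name__ == "__main__":
    data = [Frame(i, 0.0) for i in range(90)] + [Frame(90 + i, 0.3) for i in range(10)]
    out = rebalance_curves(data)
    n_curves = sum(abs(f.steering) > 0.1 for f in out)
    print(len(out), n_curves)
```

Duplicating curve frames fixes the label distribution but adds no new information, which is one reason the augmentation step below is also needed.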

But even with all that, if the car steered too far to the right and drifted out of its lane, it would crash, because it would never have learned how to recover. So the data gets curated even more.

"After selecting the final set of frames we augment the data by adding artificial shifts and rotations to teach the network how to recover from a poor position or orientation."
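The key to that augmentation is that each artificially shifted or rotated view needs a corrected steering label that steers back toward the lane center. This minimal sketch shows only the label math; the image warp itself is omitted, and the linear gains `k_shift` and `k_rot` are hypothetical stand-ins for whatever correction rule NVIDIA actually uses.

```python
import random
from dataclasses import dataclass


@dataclass
class AugmentedFrame:
    image_id: int
    shift_m: float       # simulated lateral offset from lane center (+ = right)
    rotation_deg: float  # simulated heading error (+ = pointing right)
    steering: float      # corrected label (+ = steer right)


def augment(image_id, steering, n_copies=3, max_shift=0.5, max_rot=3.0,
            k_shift=0.2, k_rot=0.02, seed=0):
    """Create shifted/rotated variants of one frame with corrected steering labels.

    If the simulated view is shifted or rotated to the right, the label is
    pushed to the left so the car learns to recover toward the lane center."""
    rng = random.Random(seed)
    out = []
    for _ in range(n_copies):
        shift = rng.uniform(-max_shift, max_shift)
        rot = rng.uniform(-max_rot, max_rot)
        corrected = steering - k_shift * shift - k_rot * rot
        out.append(AugmentedFrame(image_id, shift, rot, corrected))
    return out


if __name__ == "__main__":
    for a in augment(image_id=7, steering=0.0):
        print(f"shift={a.shift_m:+.2f} m  rot={a.rotation_deg:+.2f} deg  "
              f"steer={a.steering:+.3f}")
```

Getting the correction rule wrong teaches the car to recover in the wrong direction, so even this "synthetic" data needs careful human design.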

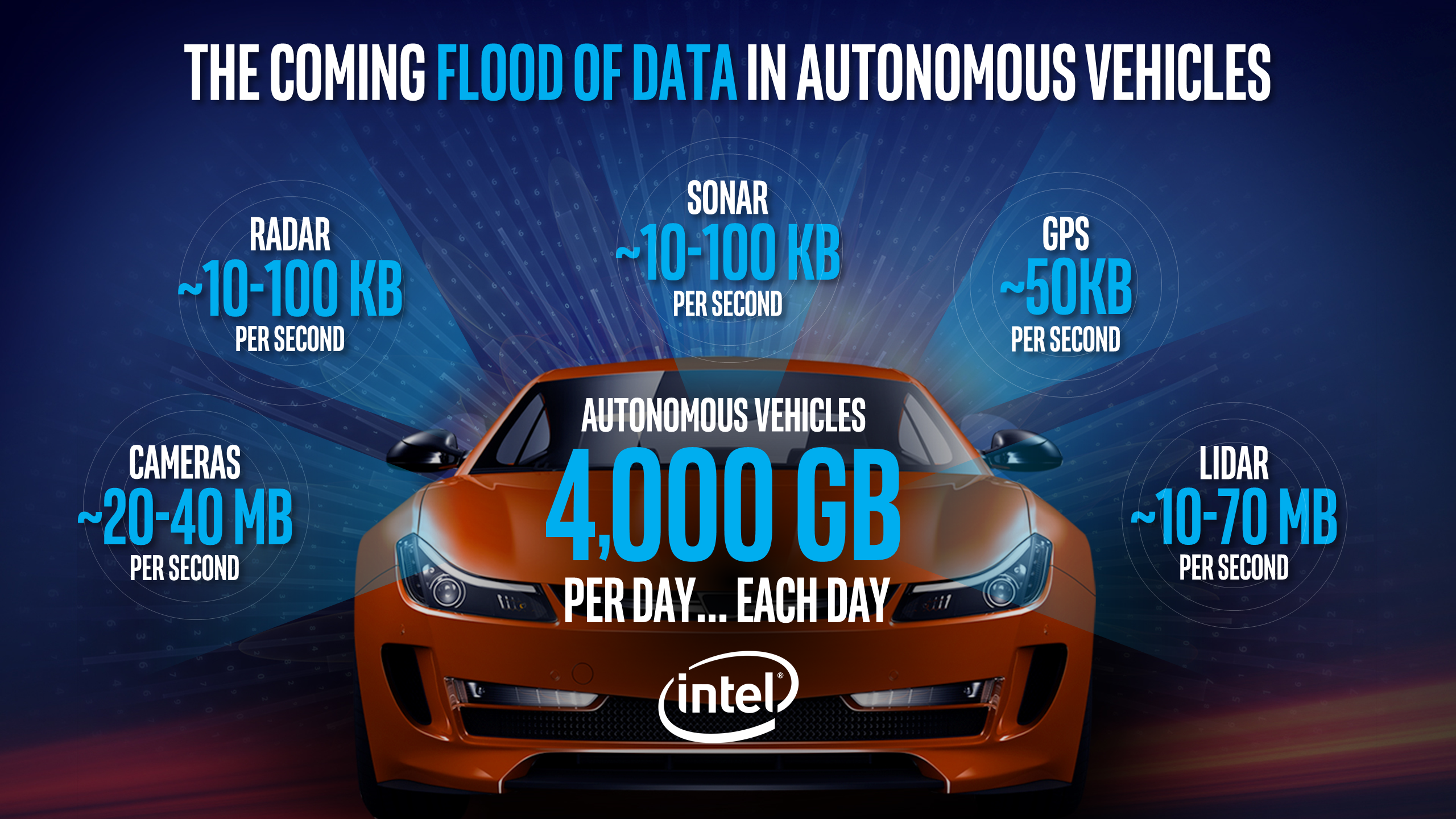

All of this just to create a Level 2 capable car. So the idea that you just beam terabytes of data per day up to Tesla HQ and get FSD out of it is laughable. This is why, as NVIDIA says, "When self-driving cars go into production, many different AI neural networks, as well as more traditional technologies, will operate the vehicle. Besides PilotNet, which controls steering, cars will have networks trained and focused on specific tasks like pedestrian detection, lane detection, sign reading, collision avoidance and many more."
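The architecture NVIDIA describes is modular: several task-specific networks feed into conventional, hand-written decision logic. Here is a minimal sketch of that shape, where every model is a hypothetical stub (plain functions standing in for trained networks) and the 15 m braking rule is a made-up example of a "traditional technology" layered on top.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Perception:
    """Outputs of separate, task-specific models (all hypothetical stubs here)."""
    steering: float = 0.0
    pedestrians: List[float] = field(default_factory=list)  # distances ahead, m
    speed_limit_kph: int = 50


def run_pipeline(frame, models: Dict[str, Callable], speed_kph: float):
    """Combine task-specific networks with a traditional rule-based planner.

    Each entry in `models` stands in for one trained network; the planning
    logic below is ordinary code, not a neural network."""
    p = Perception(
        steering=models["pilotnet"](frame),
        pedestrians=models["pedestrian_detector"](frame),
        speed_limit_kph=models["sign_reader"](frame),
    )
    # Hypothetical rule: stop if any pedestrian is within 15 m.
    if any(d < 15.0 for d in p.pedestrians):
        return {"steering": p.steering, "target_speed_kph": 0.0}
    return {"steering": p.steering,
            "target_speed_kph": min(speed_kph, p.speed_limit_kph)}


if __name__ == "__main__":
    stubs = {
        "pilotnet": lambda frame: 0.05,
        "pedestrian_detector": lambda frame: [10.0],
        "sign_reader": lambda frame: 40,
    }
    print(run_pipeline(frame=None, models=stubs, speed_kph=60.0))
```

Each stub hides its own curated dataset and training effort like the one described above for steering alone.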

(Obviously, I'm a software engineer with experience in machine learning and computer vision.)