Fellow radar person!

I think you mixed terms here. Synthetic Aperture Radar (SAR) post-processes a set of radar data taken along a path to create a virtual antenna whose aperture is as long as the path covered by the data set. That only increases resolution along the vehicle's path (cross-range), not in range.
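To put rough numbers on that, here's a quick sketch using the textbook approximations: range resolution is c/(2B) and depends only on sweep bandwidth, a real antenna of size D resolves about R·λ/D in cross-range, and a synthetic aperture of length L resolves about R·λ/(2L). The 77 GHz carrier, 1 GHz bandwidth, 10 cm antenna, 50 m range and 2 m of travel are all assumed example values, not anyone's actual design.

```python
# Sketch: SAR sharpens cross-range (along-track) resolution; range resolution
# is set by bandwidth and does not change. All numbers are assumed examples.
C = 299_792_458.0  # speed of light, m/s


def range_resolution(bandwidth_hz: float) -> float:
    """Range resolution c / (2B): depends only on sweep bandwidth."""
    return C / (2.0 * bandwidth_hz)


def real_aperture_cross_range(range_m: float, wavelength_m: float, antenna_m: float) -> float:
    """Cross-range resolution of a physical antenna of size D: about R * lambda / D."""
    return range_m * wavelength_m / antenna_m


def sar_cross_range(range_m: float, wavelength_m: float, path_m: float) -> float:
    """Cross-range resolution with a synthetic aperture of length L: about
    R * lambda / (2 L); the factor 2 comes from the two-way (out and back) path."""
    return range_m * wavelength_m / (2.0 * path_m)


if __name__ == "__main__":
    wavelength = C / 77e9  # assumed 77 GHz automotive band
    print(f"range resolution (1 GHz sweep): {range_resolution(1e9):.3f} m")
    print(f"real 10 cm antenna at 50 m:     {real_aperture_cross_range(50, wavelength, 0.10):.3f} m")
    print(f"SAR with 2 m of travel at 50 m: {sar_cross_range(50, wavelength, 2.0):.3f} m")
```

Note that the path length only shows up in the cross-range term; nothing about driving farther changes range resolution.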

Phased arrays like the ones on Starlink use multiple elements to steer a beam whose angular width shrinks as the array gets larger (roughly the wavelength divided by the array size).
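For a generic uniform linear array (not Starlink's actual layout, which I'm not assuming anything about), steering comes from a progressive phase shift of k·n·d·sin θ across the elements, and the half-power beamwidth is about 0.886·λ/(N·d) radians at broadside. A minimal sketch, with the 77 GHz carrier and half-wavelength spacing as assumptions and the phase sign convention chosen arbitrarily:

```python
import math


def steering_phases(n_elements: int, spacing_m: float, wavelength_m: float, steer_deg: float) -> list[float]:
    """Phase (radians, wrapped to [0, 2*pi)) applied to each element of a uniform
    linear array to point the beam steer_deg off broadside. Sign convention assumed."""
    k = 2.0 * math.pi / wavelength_m
    s = math.sin(math.radians(steer_deg))
    return [(-k * n * spacing_m * s) % (2.0 * math.pi) for n in range(n_elements)]


def half_power_beamwidth_deg(n_elements: int, spacing_m: float, wavelength_m: float, steer_deg: float = 0.0) -> float:
    """Approximate half-power beamwidth of a uniformly weighted linear array:
    0.886 * lambda / (N * d * cos(theta)) radians. Bigger array -> narrower beam."""
    aperture_m = n_elements * spacing_m
    return math.degrees(0.886 * wavelength_m / (aperture_m * math.cos(math.radians(steer_deg))))


if __name__ == "__main__":
    wl = 299_792_458.0 / 77e9  # assumed 77 GHz
    d = wl / 2.0               # assumed half-wavelength spacing
    for n in (8, 16, 64):
        print(f"{n:3d} elements: ~{half_power_beamwidth_deg(n, d, wl):.1f} deg at broadside")
    print([round(p, 2) for p in steering_phases(4, d, wl, 30.0)])
```

Doubling the element count halves the beamwidth, and the cos θ term shows the beam fattening as it's steered away from broadside.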

The Tesla Phoenix radar uses a phased array combined with multiple transmit and receive antennas to create a denser virtual phased array. They seem to be using TI chips, and TI has great application notes and background tutorials for anyone interested.
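The "virtual array" trick works because, in the far field, each transmit/receive pair behaves like a single element sitting at the sum of the TX and RX positions. So N_tx transmitters and N_rx receivers give N_tx × N_rx virtual elements. A small sketch with an assumed 3-transmit, 4-receive layout (positions in half-wavelengths; this is an illustration, not the Phoenix design):

```python
def virtual_array(tx_positions: list[int], rx_positions: list[int]) -> list[int]:
    """MIMO virtual array: in the far field each TX/RX pair behaves like a single
    element at position tx + rx, so N_tx transmitters and N_rx receivers yield
    N_tx * N_rx virtual elements."""
    return sorted(t + r for t in tx_positions for r in rx_positions)


if __name__ == "__main__":
    # Positions in units of half a wavelength (assumed layout, not the Phoenix design):
    rx = [0, 1, 2, 3]   # 4 receivers, lambda/2 apart
    tx = [0, 4, 8]      # 3 transmitters, spaced by the full RX aperture
    va = virtual_array(tx, rx)
    print(va)           # [0, 1, 2, ..., 11]: 12 virtual elements
    print(f"physical RX aperture: {rx[-1] - rx[0]} half-wavelengths, "
          f"virtual aperture: {va[-1] - va[0]} half-wavelengths")
```

Spacing the transmitters by the full receive aperture is what makes the virtual elements tile without gaps or overlaps.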

Good enough. Yeah, I worked on RADARs as a techie back in the (seriously) long-ago days and took a RADAR course in grad school, more or less as a fun, easy-A elective, but I really haven't touched any of it since.

Still, Synthetic Aperture Radar or not, phased array or not: wherever that beam gets pointed, unless it is exceedingly narrow, there's going to be clutter in the return right along with the desired reflections from other cars, stopped or not. And, to be clear, those cars are something the RADAR has to find, as in, sweep around and look for.
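Just how narrow is "exceedingly narrow"? The width a beam covers at range R is 2·R·tan(θ/2). A rough sketch with assumed beamwidths, for comparison with a highway lane of roughly 3.5 to 3.7 m:

```python
import math


def beam_footprint_m(range_m: float, beamwidth_deg: float) -> float:
    """Cross-range width covered by a beam of full width beamwidth_deg at range_m:
    2 * R * tan(theta / 2)."""
    return 2.0 * range_m * math.tan(math.radians(beamwidth_deg) / 2.0)


if __name__ == "__main__":
    for bw in (1.0, 2.0, 5.0, 10.0):  # assumed beamwidths, degrees
        print(f"{bw:4.1f} deg beam at 100 m covers ~{beam_footprint_m(100.0, bw):.1f} m")
```

Even a 2 degree beam is about a full lane wide at 100 m, so guardrails, signs and the road surface share the beam with whatever is in the lane.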

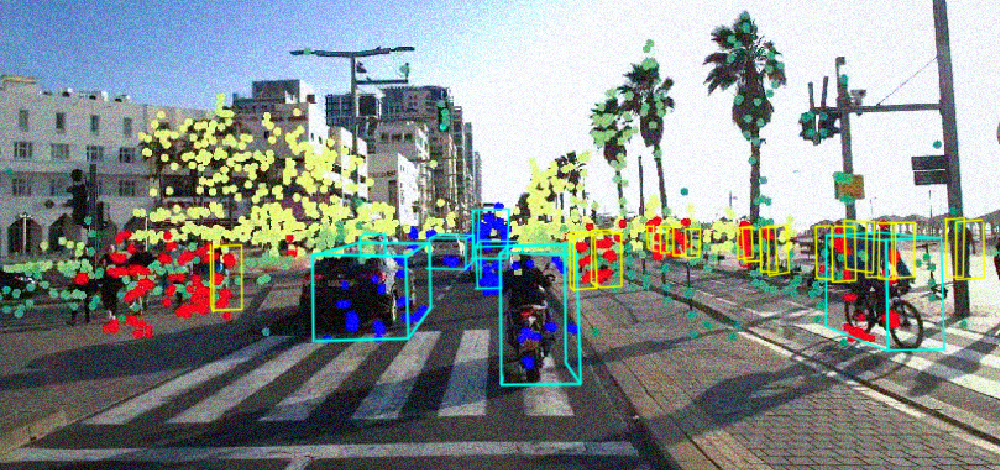

So, for the sake of argument, say we have infinite time. We take this steerable RADAR antenna and sweep it back and forth multiple times so we get the vertical as well as the horizontal (we've got hills, the attitude of the car, etc.). Store all the reflected pulses in an array of data, then do, pretty much, image recognition on the fuzzy results. Then do it again, and again, repeatedly, and from those multiple images do shape recognition (a stopped car has a shape, right?), fixed-object recognition, that whole occupancy-network stuff Tesla goes on about, and from all that, make driving decisions.

Never mind the processing time for the image thus constructed; we're doing that already with vision. It's the acquisition time that gives me serious pause. While what we're looking for isn't exactly pixels in the true image sense, it sure looks like that, at the maximum resolution of this steerable antenna. So, lots and lots and lots of sweeps... and that adds up. In the end, we'd like to match the 40-50 image captures per second that a vision camera can do; I fear we're talking two or three captures per second, and that, if true (and I'm a tyro at the modern stuff), is just Too Darned Slow.
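A back-of-the-envelope version of that worry, assuming a purely sequential raster scan that visits every beam position once per frame. The 120 × 20 degree field of view, 2 degree pencil beam and 1 ms dwell per position are assumed numbers, not specs from any real unit:

```python
import math


def raster_frame_rate(az_span_deg: float, el_span_deg: float, beamwidth_deg: float,
                      dwell_s: float, beams_at_once: int = 1) -> float:
    """Frames per second for a scan that visits every beam position once per frame,
    spending dwell_s at each position (beams_at_once positions served in parallel)."""
    positions = math.ceil(az_span_deg / beamwidth_deg) * math.ceil(el_span_deg / beamwidth_deg)
    frame_time_s = math.ceil(positions / beams_at_once) * dwell_s
    return 1.0 / frame_time_s


def max_dwell_s(az_span_deg: float, el_span_deg: float, beamwidth_deg: float, target_fps: float) -> float:
    """Longest dwell per beam position that still reaches target_fps with one beam."""
    positions = math.ceil(az_span_deg / beamwidth_deg) * math.ceil(el_span_deg / beamwidth_deg)
    return 1.0 / (target_fps * positions)


if __name__ == "__main__":
    # Assumed: 120 x 20 deg field of view, 2 deg pencil beam, 1 ms dwell per position.
    print(f"{raster_frame_rate(120, 20, 2.0, 1e-3):.2f} frames/s")
    print(f"dwell needed for 40 frames/s: {max_dwell_s(120, 20, 2.0, 40) * 1e6:.1f} us")
```

With those guesses, 600 beam positions at 1 ms each gives well under two frames per second, and hitting camera-like rates would need a dwell of only a few tens of microseconds per position.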

There's lots more. Many of these kinds of antennas can be rigged to form multiple beams at once, but then the power per beam goes down, and questions like "is it all going to fit?" come to mind.
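How much does splitting the power hurt? The radar range equation puts maximum detection range proportional to the fourth root of transmit power, so dividing power evenly over K beams cuts range by K^(-1/4). This sketch assumes each beam keeps the same antenna gain and integration time, which is a simplification:

```python
import math


def per_beam_power_change_db(n_beams: int) -> float:
    """Power per beam relative to a single beam when transmit power is split evenly."""
    return 10.0 * math.log10(1.0 / n_beams)


def max_range_factor(n_beams: int) -> float:
    """Radar range equation: R_max scales as (P_t)**(1/4), so splitting power
    evenly over n_beams scales detection range by n_beams**(-1/4)."""
    return n_beams ** -0.25


if __name__ == "__main__":
    for n in (1, 2, 4, 16):
        print(f"{n:2d} beams: {per_beam_power_change_db(n):6.1f} dB per beam, "
              f"range x{max_range_factor(n):.2f}")
```

The fourth root softens the blow somewhat, but sixteen simultaneous beams still halve the detection range under these assumptions.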

Admittedly, modern ICs can have zillions of transistors on them, leading to better, faster processing that doesn't break the power-consumption bank. Heck, I've designed some custom multi-million-gate ICs myself, but even those suckers have limits.

Got a reference for somebody doing more than tracking a target, something a bit better than, say, the systems installed in Teslas back in 2018 or so? Because this idea, kind of an improved vision system that can see through fog despite clutter, sounds several orders of magnitude more complex, power-hungry, and physically large. And if said "modern" system uses Doppler shift to get rid of the clutter at a distance, well, we're right back where we started: better resolution, maybe, but still no ability to see stopped objects in the roadway.
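Here's why the Doppler approach bites: a stopped car closes on you at exactly your own speed, the same as a sign, a bridge or a guardrail, so its two-way Doppler shift 2·v_r/λ lands right on top of the stationary clutter. A sketch with a deliberately naive, hypothetical clutter notch (`rejected_as_stationary`, 200 Hz tolerance, 77 GHz carrier, and 30 m/s ego speed all assumed; a real tracker would also use the measured angle):

```python
import math

C = 299_792_458.0  # speed of light, m/s


def doppler_hz(ego_mps: float, target_mps: float, angle_deg: float, carrier_hz: float = 77e9) -> float:
    """Two-way Doppler shift 2 * v_r / lambda for a target travelling in the same
    direction as us at target_mps, seen angle_deg off our direction of travel."""
    wavelength = C / carrier_hz
    closing_mps = (ego_mps - target_mps) * math.cos(math.radians(angle_deg))
    return 2.0 * closing_mps / wavelength


def rejected_as_stationary(f_d: float, ego_mps: float, carrier_hz: float = 77e9, tol_hz: float = 200.0) -> bool:
    """Naive clutter notch (hypothetical): drop anything whose Doppler matches what a
    stationary object straight ahead would produce at our own speed."""
    return abs(f_d - doppler_hz(ego_mps, 0.0, 0.0, carrier_hz)) < tol_hz


if __name__ == "__main__":
    ego = 30.0  # m/s, about 108 km/h (assumed)
    targets = {
        "stopped car, dead ahead": doppler_hz(ego, 0.0, 0.0),
        "overhead sign, 100 m ahead, 5 m up": doppler_hz(ego, 0.0, math.degrees(math.atan2(5, 100))),
        "car ahead doing 25 m/s": doppler_hz(ego, 25.0, 0.0),
    }
    for name, f in targets.items():
        print(f"{name:36s} {f:8.0f} Hz  rejected={rejected_as_stationary(f, ego)}")
```

The stopped car and the overhead sign come out within a few tens of hertz of each other, so any filter that throws out the sign throws out the car with it; only the moving car survives.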