"Don’t drive drowsy and it should be a non-issue."

It's not a claim. It's a question. I don't know how intrusive it is or will be some day. Right now I have $250 at stake, plus more for wiring the house and the Tesla Wall Connector I installed last night. My only concern is that I might blow $73,000 on a car that won't drive. A reasonable concern, I think. Now, I understand no one knows the answer yet, but what about Autopilot? Does it already rely on the interior camera or not?

In car camera. Should I cancel my Tesla order?

- Thread starter 404 Not Found

- Start date

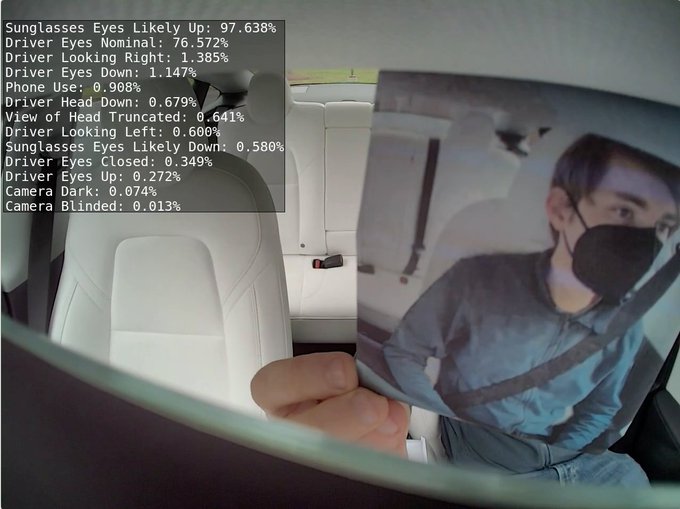

it's $73,000 Canadian so it's only like 50 cents

Hello everyone. I have a MY on order. The in-car camera was already a little concerning, but now, apparently, Tesla wants to monitor your face at all times. Does this mean that if I look tired or the camera is covered, the car will simply shut off? Maybe I should pick a different make or just keep my diesel truck? I really want the Tesla, but $73,000 is a lot of money for a driveway ornament that won't move. Thanks.

Tesla To Improve Driver Monitoring and Track Drowsiness Even When Autopilot Is Off

Tesla is upping its game in driver monitoring with an impressive array of advanced features. This article delves into the specifics of these features, highlight...

www.notateslaapp.com

ZenRockGarden

Active Member

Don’t drive drowsy and it should be a non-issue.

It's a non-issue regardless of what you look like or your state of rest because the camera is NOT USED to monitor ANYTHING except if the driver is looking at the road while using the Full Self Driving feature.

CyberGus

Not Just a Member

But a car that shuts off if you blink 5 times in a minute is useless.

You blink when you drive??

whisperingshad

Active Member

Probably will be the person buying a defeat device, take a nap and wreck, and still blame it on Tesla

CyberGus

Not Just a Member

I am not sure why you are doubling down on the "car won't move" statements / questions in this thread. Nowhere has it been said that the car would not move if it does not see your eyes. This is a claim / question being made only by you. Unless you have proof that this is the case, I am going to say, emphatically, that nowhere is this said to be the case, so the answer is, emphatically, NO YOU WILL NOT BE REQUIRED TO HAVE A CABIN CAMERA VIEW YOUR FACE TO DRIVE THE CAR NORMALLY, and intimating anything else is actually irresponsible / borderline trolling.

Now, as for Autopilot / FSD, that may require the cabin camera, I don't know, but you said "Ornament in the driveway that won't move", which is extreme hyperbole, since neither Autopilot nor FSD is required in any way, shape or form to drive the vehicle ("move").

CyberGus

Not Just a Member

I would think a moderator would perform due diligence and check for previous legitimate postings before suggesting someone may be a troll. I have owned more than 60 cars and have 5 right now. I'm looking to get rid of them all to go EV. I have a deposit on a new Tesla, the most expensive car for me ever. I paid to have my house wired for charging and purchased a Tesla Wall Connector which I myself wired to a Nema 14-50 plug and installed it on my house. Most trolls don't do this.

Tesla monitors drivers for FSD and perhaps Autopilot and if you don't comply, they shut it off. That's fine. So does Chevrolet and Cadillac. No concern to me. BUT, Tesla reportedly is about to do the same to ALL driving. This is after I ordered. This means SOMETHING will happen if you cover your camera or look tired etc. Is this not a genuine concern? Those who wrote the articles seem to think so. The car might beep or it might not move, who knows. But something will happen, right?

Hackers peeking into the code have seen this driver-monitoring activity. Tesla has shipped no code to revoke your driving privileges, nor have they announced any intention to do so.

How many cars you have owned has no relevance to your continuing to insinuate that you will not be able to drive the vehicle at all without having the cabin camera on. What is likely to happen is not being able to activate FSD and/or Autopilot. Neither of those is what you asked or are insinuating.

I made the (heavy-handed) statement I did because it was pointed out to you that FSD and Autopilot are what the cabin camera is used for, and you started talking about "A car that shuts off if you blink 5 times is useless", to go along with statements like "ornament in my driveway that won't move".

How many cars you have owned has nothing to do with how much hyperbole those statements contain.

You are making these hyperbolic claims from reading an article about a hacker who has looked at source code. The hacker source is good, as it's a known Tesla hacker. With that being said, not everything in source code gets implemented, nor is there ANY STATEMENT WHATSOEVER about needing a cabin camera to move the vehicle, as you keep trying to lean into saying with multiple posts in this thread.

That's what I find irresponsible / borderline trollish. Not that you are buying a car, not even that you have questions about how this might work; it's that you keep repeating these statements about "ornament in my driveway" and "car shutting off if you blink", which frankly come across as being alarmist for alarmism's sake.

BrianGragg

Member

Nothing to worry about. Buy this if you are concerned about it.

https://www.amazon.com/LUCKEASY-Webcam-coverfor-2017-2019-Privacy/dp/B07S5BFN6M

AleNYAle

Member

Tesla does what many other carmakers do: it nags you if you are doing something else while the car is using its assisted driving features. If you don't respond to the alerts, it will disable the Autopilot feature until you stop and start again. It won't stop the car from driving.

And now, reportedly, it might alert you or something similar if you start falling asleep (or keep looking away, etc) while driving regularly, which is obviously a safety feature.

No one ever said that the car will shut off or that it will stop driving other than you.

If you are so bothered that a car might alert you if you decide to do something else instead of looking at the road while driving, then maybe you should consider a driver or using another form of transportation. Doesn't matter how many licenses or vehicles you have.

.. Tesla Wall Connector which I myself wired to a Nema 14-50 plug ...

The Tesla Wall Connector is supposed to be hardwired.

smogne41

Active Member

OP, I can't tell you if you should order the car or not, but your concerns are totally valid. Tesla absolutely has changed and will change how the car operates after you purchase it, sometimes in ways you absolutely do not want (see V11 UI "update" in 3/Y, Emergency Lane Departure Avoidance, radar deactivation, etc). My cabin cam is covered and will always remain covered. If in the future the car starts limiting what I can do with it (nothing yet since I don't have FSD), sounding alarms and complaining, or worse, I absolutely will use a defeat device if possible or be forced to sell it. Non-negotiable.

I do deeply care about road safety, but I also care deeply about personal privacy and being in charge of tech I own. The Tesla is the ONLY piece of computing tech I own that I can not get 'root' on, and I regret that.

I believe you can get root access on your car.

SabrToothSqrl

Active Member

I already know when I'm driving tired. I don't need the car to tell me things I already know.

It's not like I'm not going to drive where I need to go.

waste of time and effort. If FSD ever works, this will be pointless.

AleNYAle

Member

Again, no one is saying the car won't drive, so why would you think you won't be able to go where you want to go?

By the way that's exactly what anyone who ever had an accident after falling asleep at the wheel said.

Tam

Well-Known Member

It's hard to predict what Tesla will do in the future, but currently Tesla only monitors drivers in modes that have Autosteer, like Autopilot, EAP, FSD, and FSD beta.

"...Tesla monitors drivers for FSD and perhaps Autopilot and if you don't comply, they shut it off. That's fine. So does Chevrolet and Cadillac. No concern to me. BUT, Tesla reportedly is about to do the same to ALL driving..."

You are right that Tesla will monitor in non-Autopilot modes as well such as cruise control and manual driving. Currently, Tesla only shuts down automation such as FSD beta, FSD, EAP, and Autopilot... but not manual driving. It is reasonable to assume that Tesla will monitor manual driving because it might plan to shut manual driving down due to safety concerns, but there's no evidence either way.

Other manufacturers only shut down the automation system as a penalty but never manual driving. Who knows? Tesla has been a pioneer so it is reasonable to suspect that Tesla might lead the way on manual driving shutdown even though the possibility is remote.

The problem with Tesla is it's just like its pricing: unpredictable from day to day.

One minute you have a radar in your car, the next minute the Service Center would physically remove it when you come in for an unrelated issue like tires...

Tesla Is Physically Removing the Model S' Radar Sensors

Several Tesla owners complained that Tesla Service Center is physically removing radar sensors from their Model S as Tesla Vision renders them obsolete

Other manufacturers are more consistent. Once you buy a car with radar, it stays, and no one will take it away from you.

I bought 3 Tesla cars so far but that's why I bought a fourth EV as a Lucid for consistency instead of another Tesla for more unpredictability. Lucid monitors the drowsy driver in its ADAS mode, but you can turn the alert off in the configuration menu. Turning the drowsy alert monitor on gives you alerts but does not shut down the ADAS (Autosteer and Cruise Control). It only escalates the shutting down process if you fail to respond to steering torque warnings. So Lucid allows you to drive drowsy (quietly without alerts or noisily with alerts depending on how you set your configuration) as long as the drowsy driver complies with the steering torque requirement.

Nice to see a few helpful posts now.

"The tesla wall connector is supposed to be hardwired"

As for hardwiring the wall connector, that's only necessary if you are drawing 48 amps off a 60 amp breaker. I installed a 40 amp breaker and set my WC to charge at 32 amps, which is fine on a 14-50 plug. It also passed inspection by the electrical authority.
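For anyone following the breaker math in that post, here is a rough sketch. It assumes the common rule of thumb that continuous EV charging should stay at or below 80% of the breaker rating. The 80% factor and the function name are assumptions for illustration, not something stated in the thread or taken from Tesla documentation, so check your local electrical code.

```python
# Assumption: continuous loads such as EV charging are limited to 80%
# of the circuit breaker rating. Verify against your local code.
CONTINUOUS_LOAD_PERCENT = 80


def max_continuous_amps(breaker_amps: int) -> int:
    """Return the highest continuous charging current for a breaker size.

    Integer arithmetic is used so whole-amp results stay exact.
    """
    return breaker_amps * CONTINUOUS_LOAD_PERCENT // 100


if __name__ == "__main__":
    # The two breaker sizes mentioned in the thread.
    for breaker in (60, 40):
        print(f"{breaker} A breaker -> {max_continuous_amps(breaker)} A continuous")
```

Under that assumption, the numbers in the post line up: a 60 amp breaker gives 48 amps and a 40 amp breaker gives 32 amps.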

lencap

Member

I understand the desire for "leave me alone and let me drive", but I also appreciate the intent behind monitoring drivers for attentiveness.

The link below, dated February 2022, speaks to driver attentiveness at different times of the day, and among a few different car models, including a Tesla Model 3, Cadillac Escalade, Subaru Forester, and Hyundai Santa Fe. Some of the parameters from the test are these (direct quotes):

Three 2021 model year vehicles, the Cadillac Escalade, Subaru Forester, and Hyundai Santa Fe, and the 2020 Tesla Model 3 were tested by AAA over two days for 10-minute segments along a 24-mile loop of a limited-access toll road in California.

The Escalade, equipped with “Super Cruise,” and the Forester with “EyeSight” and “DriverFocus” are categorized by AAA as direct ADAS with driver-facing infrared cameras. The Santa Fe, with “Highway Driving Assist,” and no driver-facing camera as well as the Model 3 with “Autopilot” and a cabin camera not used for driver monitoring are categorized as indirect ADAS.

This is the link to the study: AAA study finds all ADAS-equipped vehicles should have driver-facing cameras for distraction detection

The interesting part of the study, which is a joint effort initiated by AAA, is also commented upon (direct quote from study):

A Feb. 1 Consumer Reports article states that it along with the National Transportation Safety Board, the Insurance Institute for Highway Safety (IIHS), and the European New Car Assessment Program have found that when automation, such as adaptive cruise control and lane assist, are engaged at the same time drivers are more likely to not pay attention to their surroundings. About 50% of new vehicle models allow drivers to engage adaptive cruise control and lane-centering at the same time, according to an analysis by Consumer Reports last fall. Because of that correlation, they believe ADAS features should always be paired with driver monitoring using computers and cameras to keep an eye on attentiveness.

The point of the study, apparently, is that when drivers engage any type of automated driving assistance they tend to pay less attention and are less aware of immediate traffic situations, many of which, as the study points out, may require immediate interaction by a real person driving. For that reason the study concludes that some type of driving monitoring system is appropriate.

In 2017 my wife and I visited the Fremont Tesla site and I had a very interesting 20 minute conversation with a Tesla employee. I learned that he was a dual degreed PhD engineer/Doctor of Divinity, and his official title was "ethicist". His job at Tesla was to "be a neutral arbitrator for the 'greater good'". I asked him to describe his job. He told me that Tesla hired him, and many others on the team covering a wide range of specialties, to determine "programming rules based upon all aspects of the greater good and responsibility for protecting Tesla's customers".

For example, in a situation where the Tesla automated driving system determines that an accident is unavoidable, what is the correct response? And how will it, if at all, change as technology changes? He explained it like this: The Tesla you are driving is about to be in an accident with serious potential for harm to the occupants of the Tesla AND to the other drivers in different cars. Should Tesla minimize the potential for harm to the Tesla owner, or attempt to minimize overall potential harm across all drivers? For example, what if the accident would likely be a multi-car event, and the Tesla cameras realize that the cars involved are smaller, less structurally sound vehicles? What is the priority? Keep in mind all of these decisions become part of the code/programming for FSD/AutoPilot and other Tesla products.

Taking it a step further, what happens when FSD capability improves so that car-to-car communication in real time is possible? We are in the early stages of FSD in the industry, with Mercedes-Benz certified as Level 3 (Conditional Automation) using "Drive Pilot". What happens when cars routinely provide Level 4 High Automation (casually defined as "the car drives, you ride"), and eventually Level 5 "Fully Automated Driving"?

If you are a solo Tesla passenger and the car you are about to impact is occupied by a large family, or elderly passengers, does that affect the way the car should act? He went on to describe an incredible array of circumstances, all of which need to be considered, evaluated and either incorporated into FSD or not.

He emphasized that he had no solution at the time, nor was one imminent, but I was beyond impressed that Tesla assembled and hired such a team to evaluate the way they should view and prioritize these aspects.

So, to finally answer the OP's question, I guess there is no single answer, just a probability distribution of outcomes, some of which will invariably involve driver attentiveness and response times, to create a flexible "risk minimization" evaluation of possible outcomes. And my assumption is that whatever is initially adopted will morph over time to include newer technology that allows for deeper evaluation of risk and attention.

Incidentally, it's interesting that most manufacturers have some type of driver monitoring system as a default in their self driving features, whether or not they are in an EV or ICE.

Did you attach a plug to the end of your wall connector? That's so strange... I suppose it's a mobile wall connector now, lol.
