I think there's a bit of a logical fallacy in the discussions of edge cases like snow and fog conditions. It's a familiar scenario: people take risks driving in bad weather and low-visibility conditions. You kind of make a bet, knowing you might lose, but most of us have done it before and have stories that usually end with less than tragic consequences.

It's pretty easy to find YouTube videos of traffic sliding around on icy roads, cars in Seattle spontaneously sliding down the street with a domino effect, and so on. Less common would be collisions due to low visibility in fog (or here in the desert, opaque dust storms or flash-flooded street crossings that can hide a huge washed-out ditch).

Human nature is that we often forge ahead even when we understand that it's risky. Places to go, errands to run, need to get to the school or to the house. If we were to cancel the plan and it turned out that everyone else made it fine, it would be embarrassing to explain. If we slide the car off the road or have a fender bender in the fog, it's mostly just life experience, and we're limited in harsh judgment of ourselves or our friends.

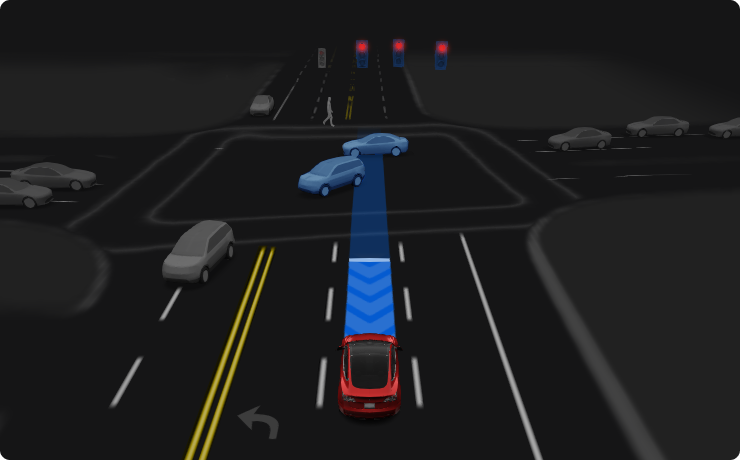

But - if Tesla FSD, or a robotaxi, takes this kind of risk and fails, there'll be very little understanding and mountains of hysteria. Nationwide coverage. This is kind of obvious, yet we have repeated forum challenges claiming that Tesla FSD can't be good unless it takes those risks and attempts to operate in situations where you and I do (but really shouldn't).

In my view, this is yet another reason why true L5 is not only an extremely difficult goal but actually impossible, if we demand that the machine conquer all these human driving examples that involve high risk. It doesn't matter whether humans usually or nearly always get away with it. That standard won't survive the first post-fatality investigation of the robot driver. Actually, it won't even survive the ire of a disgruntled forum user who busted a rim or smacked into a pole.

I do wish everyone would keep this in mind when they spin up edge cases that FSD "won't ever be able to do". If you think about it sensibly, you don't want it even to try those things with you or your family, or your shiny Tesla.

P.S. this point has nothing to do with excusing Tesla from criticism over design engineering or feature release decisions, e.g. camera cleaning or camera placement. Nor is it an attack on sensible discussion about the car's capability in adverse conditions. I'm pointing out the contradiction of seeking human-like risk-taking (including very questionable circumstances) while also demanding superhuman safety results.