Minor release posing as a major release.

The Autopilot team has multiple groups, and even within the neural network portion there are probably at least two working on the various top-level networks: Objects, Lanes, Occupancy. One of the major architectural changes in 10.69(.0/1/2) was the new video occupancy network that FSD Beta now uses to respond to arbitrary things in the road. Those focusing on Objects, which used to be the primary neural network in 2021, were working on improvements in parallel, and that work is now ready as part of 10.69.3.

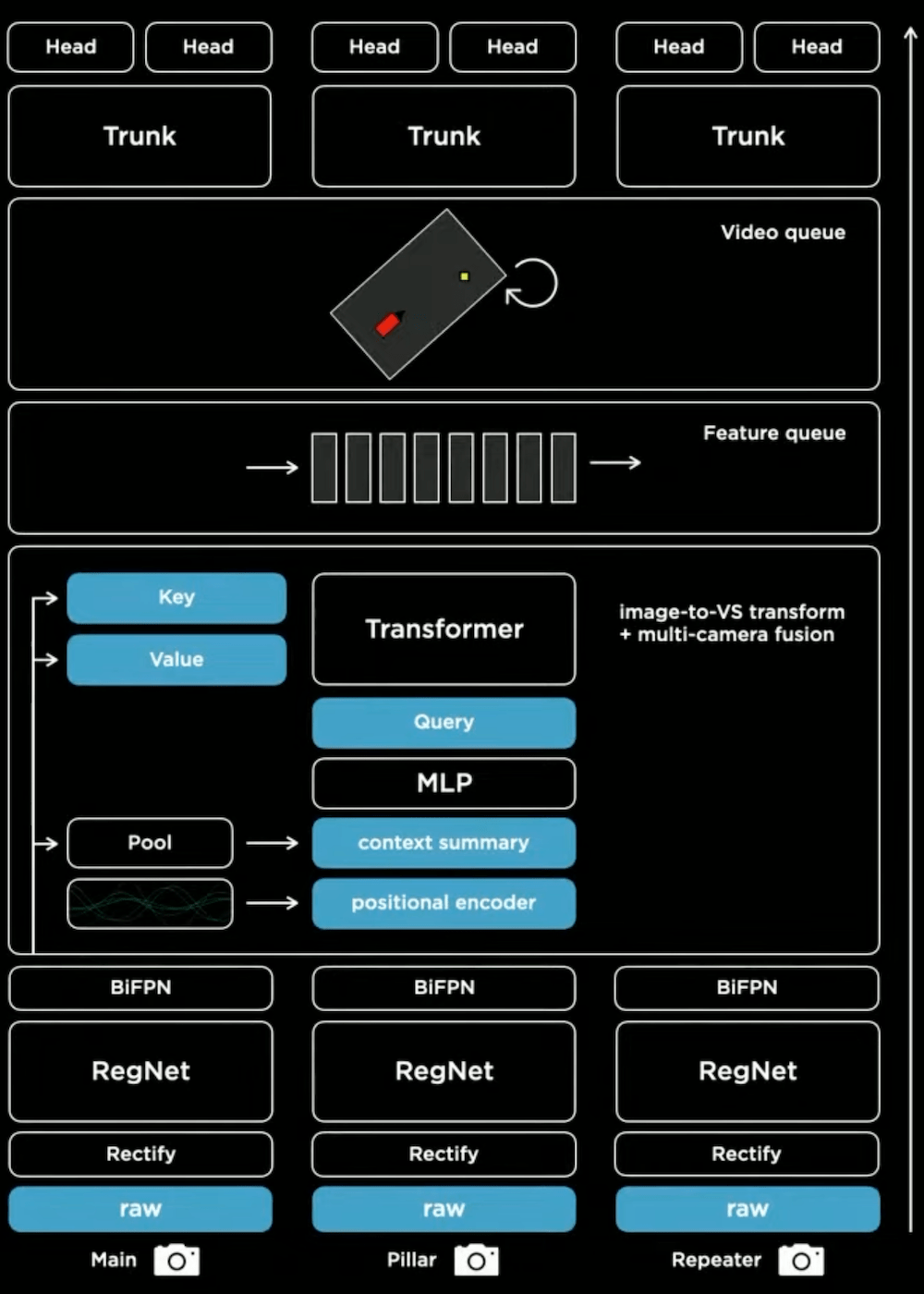

Here's how things were looking for the Tesla Vision plan back at AI Day 2021:

Even something as "simple" as using raw camera photon counts was desired over a year ago, and only now with 10.69.3 has it become ready for FSD Beta testing after many months of practical engineering optimizations throughout the whole stack:

- Upgraded the Object Detection network to photon count video streams and retrained all parameters with the latest autolabeled datasets (with a special emphasis on low visibility scenarios). Improved the architecture for better accuracy and latency, higher recall of far away vehicles, lower velocity error of crossing vehicles by 20%, and improved VRU precision by 20%.

(To be clear, FSD Beta 10.9 from January introduced using 10-bit photon count streams for the relatively new generalized static object network -- now known as the occupancy network, so those learnings and underlying capability allowed the Objects network to make the change.)
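As a rough illustration of why higher bit depth can matter in low visibility, here is a minimal sketch. It assumes a plain linear 10-bit to 8-bit reduction (dropping the two lowest bits), which is a simplification chosen for the example and not a description of Tesla's actual image pipeline; `to_8bit` and the sample pixel values are hypothetical.

```python
def to_8bit(count_10bit):
    """Hypothetical linear reduction: drop the two least significant bits."""
    return count_10bit >> 2

# Four distinct dark-pixel readings on a 10-bit scale (0..1023), made up for illustration.
dark_pixels_10bit = [4, 5, 6, 7]

# After the reduction, every reading lands on the same 8-bit level.
dark_pixels_8bit = [to_8bit(v) for v in dark_pixels_10bit]

distinct_levels_10bit = len(set(dark_pixels_10bit))
distinct_levels_8bit = len(set(dark_pixels_8bit))

print(distinct_levels_10bit, distinct_levels_8bit)
```

Under this assumption, detail that a network could still tell apart in the 10-bit stream is simply gone in the 8-bit one, which is the kind of loss that hurts most in dark scenes.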

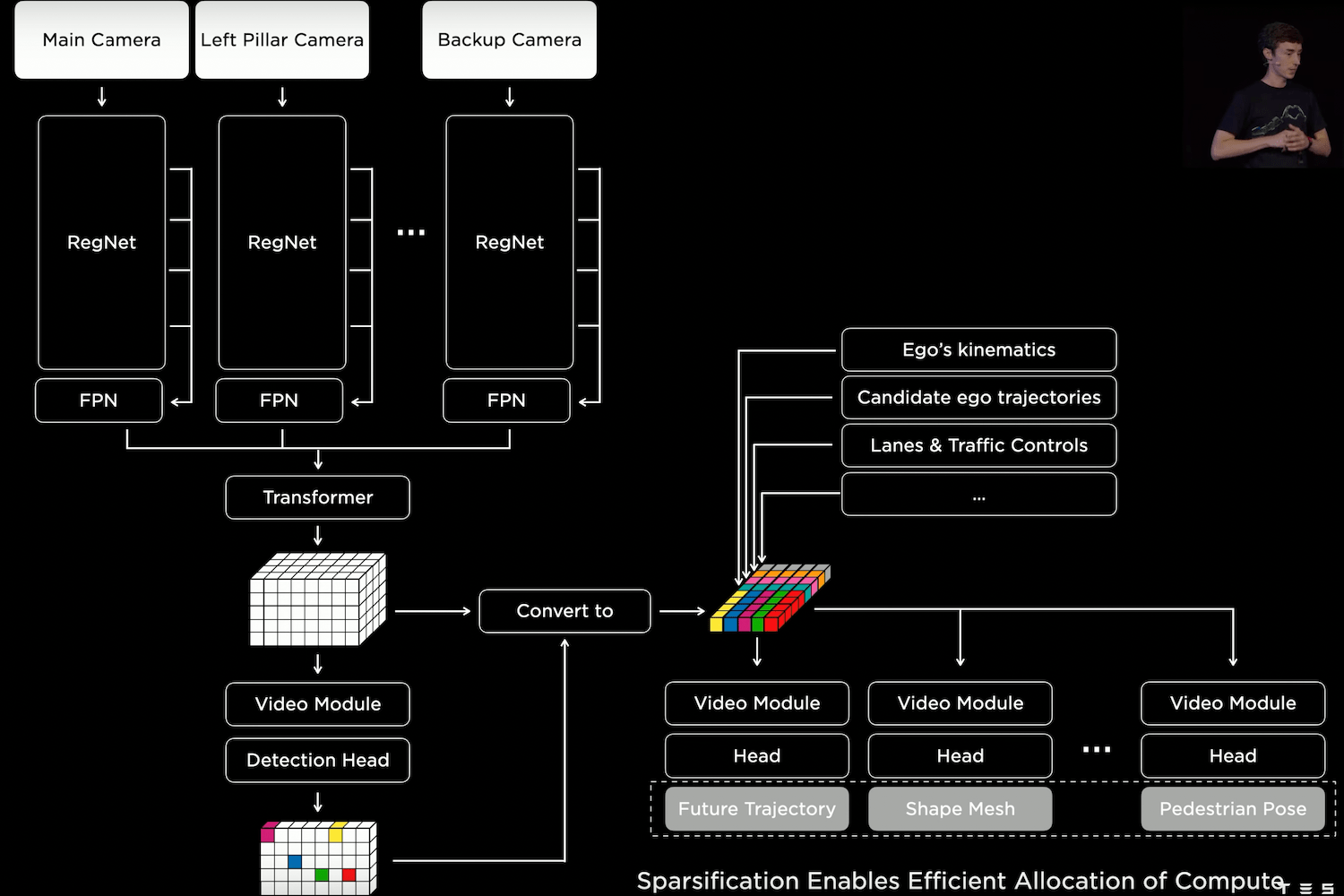

Also relevant to the Objects network was AI Day 2022's introduction of the 2-stage / 2-phase split, where a sparsification step enables efficient allocation of compute for real-time driving requirements:

- 10.69: Upgraded to a new two-stage architecture to produce object kinematics (e.g. velocity, acceleration, yaw rate) where network compute is allocated O(objects) instead of O(space). This improved velocity estimates for far away crossing vehicles by 20%, while using one tenth of the compute.

- 10.69.3: Converted the VRU Velocity network to a two-stage network, which reduced latency and improved crossing pedestrian velocity error by 6%.

- 10.69.3: Converted the NonVRU Attributes network to a two-stage network, which reduced latency, reduced incorrect lane assignment of crossing vehicles by 45%, and reduced incorrect parked predictions by 15%.
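To make the O(objects) vs. O(space) distinction concrete, here is a toy sketch of the idea. Everything in it is assumed for illustration: the grid size, the `objectness` scores, the linear `head`, and the function names are hypothetical and say nothing about how the real networks are built.

```python
import random

def head(features, weights):
    """Toy stand-in for an expensive kinematics head: one linear layer."""
    return [sum(f * w for f, w in zip(features, column)) for column in weights]

def dense_pass(grid, weights):
    """Single-stage: run the head at every grid cell, so cost grows with space."""
    return {(y, x): head(cell, weights)
            for y, row in enumerate(grid)
            for x, cell in enumerate(row)}

def two_stage_pass(grid, objectness, weights, threshold=0.5):
    """Two-stage: a cheap first stage keeps only cells flagged as objects,
    then the expensive head runs on those cells alone (cost grows with objects)."""
    candidates = [(y, x)
                  for y, row in enumerate(objectness)
                  for x, score in enumerate(row)
                  if score > threshold]
    return {(y, x): head(grid[y][x], weights) for (y, x) in candidates}

rng = random.Random(0)
SIZE, CHANNELS, OUTPUTS = 50, 8, 3  # outputs: velocity, acceleration, yaw rate

grid = [[[rng.random() for _ in range(CHANNELS)] for _ in range(SIZE)]
        for _ in range(SIZE)]
weights = [[rng.random() for _ in range(CHANNELS)] for _ in range(OUTPUTS)]

# Pretend the first stage found three objects in the scene.
objectness = [[0.0] * SIZE for _ in range(SIZE)]
for y, x in [(10, 20), (25, 5), (40, 45)]:
    objectness[y][x] = 0.9

dense = dense_pass(grid, weights)
sparse = two_stage_pass(grid, objectness, weights)
print(len(dense), len(sparse))  # head evaluations: every cell vs. flagged cells only
```

The predictions at the flagged cells are identical in both passes; the difference is that the two-stage version never spends the expensive head on empty road.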

On the surface, the Objects network is still doing the same thing: taking camera inputs and outputting the same set of attribute predictions. But it required some serious practical re-engineering / refactoring to get it ready for a wider deployment worthy of a "major release." Not only is accuracy improved for the safer city streets driving necessary for "wide release" without Safety Score requirements, but latency and compute requirements are also significantly improved for driving with single-stack at 80mph+.