Fernand

Active Member

I tap the screen somewhere in there, but who knows - I often get Profiles, and Sentry, and Dashcam. Sometimes I NOA to Burger King. Yum.

I most often get the profile.

Couldn't have said it better myself. I've used Autopilot extensively for 7 years, and it has NEVER taken over and refused to let the driver assume control. Another good point here is that you quickly get to know what the software can and can't do well and act accordingly. I've had 2 occasions where AP got confused in double turn lanes, which required me to take over. Tesla is very clear that this is beta code. Sure hope that people who don't get that don't ruin it for those of us who do.

Couldn’t agree more.

ANYONE using it KNOWS (or should) its inherent potential for danger/endangering in certain situations. While it often does much right…

as we’ve all been told…

it may do exactly the wrong thing at exactly the wrong time.

We CHOOSE to use it in Beta.

You don’t get to do that and then not own it when you should have moved the car out of danger yourself, or out of the trajectory of what was likely going to be a problem if no intervention was taken.

I call BS on this account being a dead-bang accurate description of what occurred and of what the driver likely should have done.

There is a MAJOR difference between being fallible about a timeline or even fudging it and knowing an important objective fact and lying about it.

Correct. Solved problem. Two weeks. These are words.

Plausible deny ability

Here is an interesting Tweet from Musk. Does that mean this accident submitted to NHTSA was proven as fake?

If FSD has any liability, it must be an "FSD accident".

Clearly, spin. Nothing to see here.

Honestly, yes. If the owner thought he had FSD enabled but he actually didn’t, then FSD Beta would be a contributing factor in the crash. If he didn’t have FSD Beta, he wouldn’t think it was engaged.

So you're saying that just by virtue of the driver having FSD Beta on their vehicle, it's an FSD accident? Because the driver could at any point mistakenly believe it's enabled? That's nonsense.

I hope this is an autocorrect mistake. Otherwise it's going on /r/BoneAppleTea/

Ship it.

We have all experienced FSD disengaging because we held the wheel a tad too tight. So the claim that someone tried to correct the steering but FSD wouldn’t allow it is difficult to believe, just like that “no one was in the driver's seat” accident was difficult to believe.

Given the language in the NHTSA report, it's clear the driver was very confused about what mode their vehicle might have been in. So I think it's likely the accident happened, but not while FSD Beta was engaged.

Especially the "but the car by itself took control and forced itself into the incorrect lane" makes it sound like an emergency lane departure maneuver, which wouldn't have happened if FSD Beta was engaged.

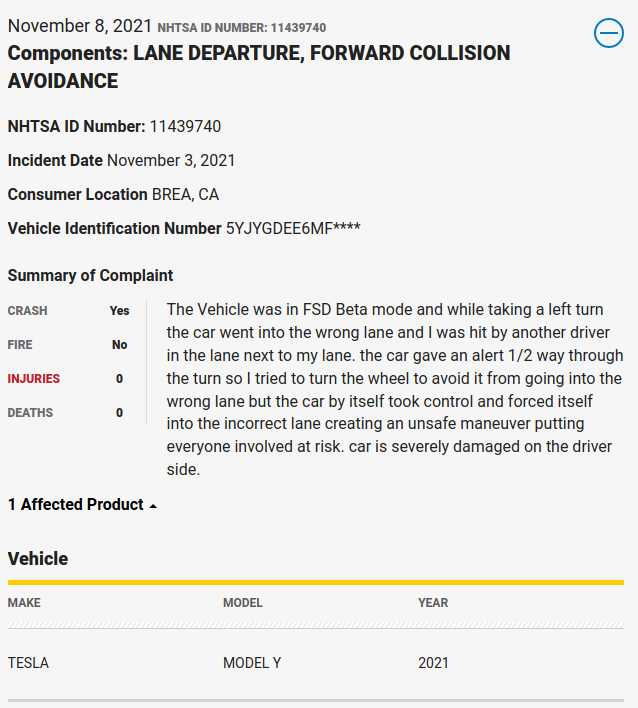

What worries me is that this might be the “dual disengage” problem I have pointed out to Tesla and in posts here: in these situations you grab the steering wheel, which disengages FSD all right, but leaves TACC engaged. And TACC, being dumb, may well cause the car to speed up if it’s not driving at the set speed (likely on a corner). IMHO this is bad design.

Saw this NHTSA report on Twitter:

I believe there was an accident since we have a report to the NHTSA, but the details of what happened sound suspicious to me. If the driver tried to turn the wheel, it should have disengaged FSD and gone back to TACC only, where the driver would be in control of steering. FSD Beta does not "by itself take control and force itself". Perhaps it was in manual mode and it was the lane departure assist that "nudged" the car, not FSD Beta? FSD Beta could have missed the turn, gone into the wrong lane, and hit another car. We've seen situations like that in FSD Beta videos. Perhaps the driver was not able to intervene in time. But I don't believe that the driver tried to take over and FSD Beta forced the car to go into the other lane anyway.

I understand your point but don’t see it as an issue to keep TACC engaged when overriding the steering. Generally it would be more unsafe if I took over the steering from FSD / AP and the car suddenly started slowing down because TACC was also disabled. That would mean every manual disengagement requires me to simultaneously grab the wheel / yoke and press the accelerator just the right amount to maintain my current speed.

What worries me is that this might be the “dual disengage” problem I have pointed out to Tesla and in posts here: in these situations you grab the steering wheel, which disengages FSD all right, but leaves TACC engaged. And TACC, being dumb, may well cause the car to speed up if it’s not driving at the set speed (likely on a corner). IMHO this is bad design.

Now, I consider it more likely the guy reporting the incident was embellishing things to deflect blame (not the first to try such a thing), but I still think grabbing the wheel should disengage ALL AP functions on city streets (but not on highways).
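For anyone trying to picture the two options being argued about here, this is a toy sketch of the disengagement logic as the posts describe it. It is not Tesla's actual code or API: `AssistState`, `RoadType`, and both functions are made-up names, and the behavior is only what posters in this thread report (a steering override drops steering assist but leaves TACC on) next to the proposed change (also drop TACC on city streets, but not on highways).

```python
from dataclasses import dataclass
from enum import Enum


class RoadType(Enum):
    # Hypothetical road classification, used only for this illustration
    CITY = "city"
    HIGHWAY = "highway"


@dataclass
class AssistState:
    steering_assist: bool  # FSD / Autosteer is controlling the wheel
    speed_assist: bool     # TACC is holding or resuming the set speed


def on_steering_override(state: AssistState) -> AssistState:
    """Behavior as described in the thread: grabbing the wheel
    drops steering assist only; speed control stays as it was."""
    return AssistState(steering_assist=False, speed_assist=state.speed_assist)


def on_steering_override_proposed(state: AssistState, road: RoadType) -> AssistState:
    """The proposal above: on city streets a wheel override also drops
    speed control; on highways speed control is kept."""
    keep_speed = state.speed_assist and road is RoadType.HIGHWAY
    return AssistState(steering_assist=False, speed_assist=keep_speed)
```

With both assists engaged, the first function leaves `speed_assist` on, which is the "dual disengage" concern. The second turns it off on `RoadType.CITY` only, and the trade-off raised in the reply (the car starts slowing the moment you take the wheel) applies to that case.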