Welcome to Tesla Motors Club

Discuss Tesla's Model S, Model 3, Model X, Model Y, Cybertruck, Roadster and More.

FSD Beta 10.69

- Thread starter Buckminster

willow_hiller

Well-Known Member

> What’s the rollout for .2.3 looking like? Still no luck here getting it.

The pending installations count on TeslaFi has only been going down since yesterday. So only about 15% of the FSDb fleet was selected, and they haven't done a second wave yet. All new installs are from those that were pending since yesterday.

AlanSubie4Life

Efficiency Obsessed Member

> Check out 9:43

Maybe I missed something but just looks like a normal attempted veer into traffic next to him. This happens all the time. Not a regression.

AlanSubie4Life

Efficiency Obsessed Member

It literally tells you it will do this.

> i love this forum's perception of safe great, technology. hahahaha

I did not say anything about it being good. I just said it was normal and not a regression (context!).

I’ve been crystal clear about FSD quality.

It is a dot dot revision. It is not going to be better in any appreciable way. Regressions unlikely as well.

willow_hiller

Well-Known Member

It literally tells you it will do this.

I did not say anything about it being good. I just said it was normal and not a regression (context!).

I'm not even sure what exactly is being pointed out as unsafe. You can see the path planner, and it's planning on merging behind the passing vehicle. The passing car was visualized on the screen the entire time, and it was pretty clear there was no chance of a collision.

Sure, it was an abrupt lane change at the last minute before a turn. Surprising, but not unsafe by itself.

Also this guy seems to be a very cautious tester, which is good, but it means you cannot just point out his disengagements as failures of the system. Right after this disengagement, there's a red light, the car is coasting to a stop, a pedestrian starts to cross, she gets highlighted on the screen in blue... and then he disengages anyway. The dude's welcome to disengage whenever he feels uncomfortable, but I wouldn't have disengaged in that situation.

AlanSubie4Life

Efficiency Obsessed Member

> Also this guy seems to be a very cautious tester, which is good, but it means you cannot just point out his disengagements as failures of the system. Right after this disengagement, there's a red light, the car is coasting to a stop, a pedestrian starts to cross, she gets highlighted on the screen in blue... and then he disengages anyway. The dude's welcome to disengage whenever he feels uncomfortable, but I wouldn't have disengaged in that situation.

I mean, that was pretty poor. Not sure why it didn’t slow earlier and faster. Either that or go through on the yellow! Choose one or the other!

He disengaged probably because he didn’t want to be jerked around and the car was being dumb. Just more comfortable to have control.

Regen is extremely powerful and brings the car to a prompt halt, if it is used. Plenty of time here.

Just a bit more of a report on 69.2.3. This morning, two interventions on the way to work. Admittedly, mostly on interstates, but still. (The car still swings badly to the right on left turns.) That's better than the previous releases where there were five to ten interventions on that path.

Went to lunch with the gang at a place some 7 miles away, local roads all the way: Stop signs, traffic lights, lots of turns, twisty roads. On the way there: No interventions. On the way back: They're doing construction on a road at the work campus. Right lane 1/2 blocked off with cones. And a construction guy in a truck, facing the opposite way to travel, taking up the rest of the road, except for a barely passable strip on the far left. The car freaked out when presented with this, so I took over.

I know, I know: Any error is a fatal error. But, on the way back, I had a techie passenger who wanted to check out this FSD-b stuff and Teslas in general. He didn't get freaked out, even with the (relatively slow) left turn with high-speed, oncoming traffic, although I did warn him that this was a Beta and quoted that stock phrase, "The car will do the wrong thing at the wrong time."

This is beginning.. to look good...

More to do, yes. But better, you betcha.

Went for my first couple of drives with 2022.69.2.3 this morning.

The first was coming back on my return "torture" test route.

It was significantly better than before. It no longer stops for yields that it used to wait at interminably. It is still slightly jittery, but it completed them without pauses or crazy stops and slowdowns. Impressed with that.

The second drive was the start of my commute route, a 4/5-lane undivided road.

Everything was going well: smooth acceleration, nice cornering, etc.

That was until it got to the light I needed to turn left at. It had us positioned in the left lane, waiting for a gap to get into the dedicated left turn lane.

When it started signaling I misinterpreted it and assumed it was signaling left - but no - it decided that we needed to be in the right lane, 30 feet from the light we had to turn left at. Disengaged and reported it.

I'm rather disappointed that I'm still much better at judging a "regen only" stop than FSDb is.

Oh well.

swedge

Member

> This could be hardware limitations. The cameras are only 1280x960. It just doesn't understand what's happening downroad where humans do. Human foveas are much better and are on smart double-gimbals.

DrChaos,

Please check me on this. I am reading that the human eye has about 5 degrees of foveal field of view with a resolution of .02 to .03 degrees. Also I've read that Tesla's front facing camera has a field of view of 35 degrees. If 1280 pixels cover 35 degrees, that is about .027 degrees per pixel. If this is correct, the resolution of that Tesla camera is roughly the same as that of the human fovea.

As you say, we can point our high resolution area in any chosen direction, but it is only about 5 degrees wide, while the Tesla has the same high res across its entire field of view.
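For anyone who wants to check the arithmetic, here is a minimal Python sketch. It assumes the pixels are spread evenly across the field of view (real lenses distort, so this is only an average), and the function and constant names are made up for illustration. The 35 degree and 1280 pixel figures are just the ones quoted above, not verified specs.

```python
def degrees_per_pixel(fov_degrees: float, horizontal_pixels: int) -> float:
    """Average angular width of one pixel, assuming an even spread across the FOV."""
    return fov_degrees / horizontal_pixels


# Foveal resolution range quoted in the post, in degrees (an unverified figure).
FOVEA_MIN_DEG = 0.02
FOVEA_MAX_DEG = 0.03


def within_foveal_range(resolution_deg: float) -> bool:
    """True if the given angular resolution falls inside the quoted foveal range."""
    return FOVEA_MIN_DEG <= resolution_deg <= FOVEA_MAX_DEG


# Camera figures quoted in the post (assumptions, not confirmed specs).
camera_res = degrees_per_pixel(35.0, 1280)

print(f"{camera_res:.4f} degrees per pixel")  # prints "0.0273 degrees per pixel"
print(within_foveal_range(camera_res))        # prints "True"
```

So under those assumptions the camera works out to roughly .027 degrees per pixel, which sits inside the .02 to .03 range quoted for the fovea.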

I looked this up because my FSDβ fails to behave correctly at a nearby three-way stop, where below the stop sign there is a small sign saying "oncoming traffic does not stop." I wondered if the car's camera could resolve that text, or if it simply did not try to read and understand it.

If my resolution info above is correct, then the car can see the sign as well as I can, and this is just a detail Tesla has not dealt with yet. The same would apply to your comment about its distance vision. On the other hand, extending the distance would require more processing to include farther objects and hence more computation, so perhaps there is a processing hardware limitation in play.

I hope that over time the software can be optimized enough to let the car read relevant signs and see farther ahead without yet more hardware improvements. Perhaps it can save processing similar to how we do by pointing our eyes, thus concentrating computation on areas with interesting details.

In any case, it would be good to resolve the camera resolution question, so to speak.

SW

powertoold

Active Member

I don't think FSD Beta currently considers road markings. In a lot of videos, people will say that the car "sees" that it's a right or left turn lane (in the visualization) but makes a dumb decision despite the road markings.

Right now, Tesla is likely training their lanes network in more of an "end-to-end" approach, so the network consumes the entire scene along with all road markings and then makes predictions about the lane semantics. So the car doesn't actually "see" lane markings in the way humans do. That's why we still experience dumb lane decisions despite the road markings showing up on the visualization.

That's why Tesla has fed its lanes network 1M+ intersections in order to get it to make predictions about all sorts of lane environments. It's definitely a brute force way of solving the problem.

willow_hiller

Well-Known Member

> I don't think FSD Beta currently considers road markings. In a lot of videos, people will say that the car "sees" that it's a right or left turn lane (in the visualization) but makes a dumb decision despite the road markings.
>
> Right now, Tesla is likely training their lanes network in more of an "end-to-end" approach, so the network consumes the entire scene along with all road markings and then makes predictions about the lane semantics. So the car doesn't actually "see" lane markings in the way humans do. That's why we still experience dumb lane decisions despite the road markings showing up on the visualization.

Agreed. The "FSD Visualization Preview" you get on HW3 without FSDb clearly identifies and displays turn lane markings. However, all of those visuals are now gone on FSDb. Obviously, the car classifies a lot of things that it doesn't display on the screen, but I don't see why they'd remove those identified lane markings from the FSD Beta visualization if they were using them as inputs.

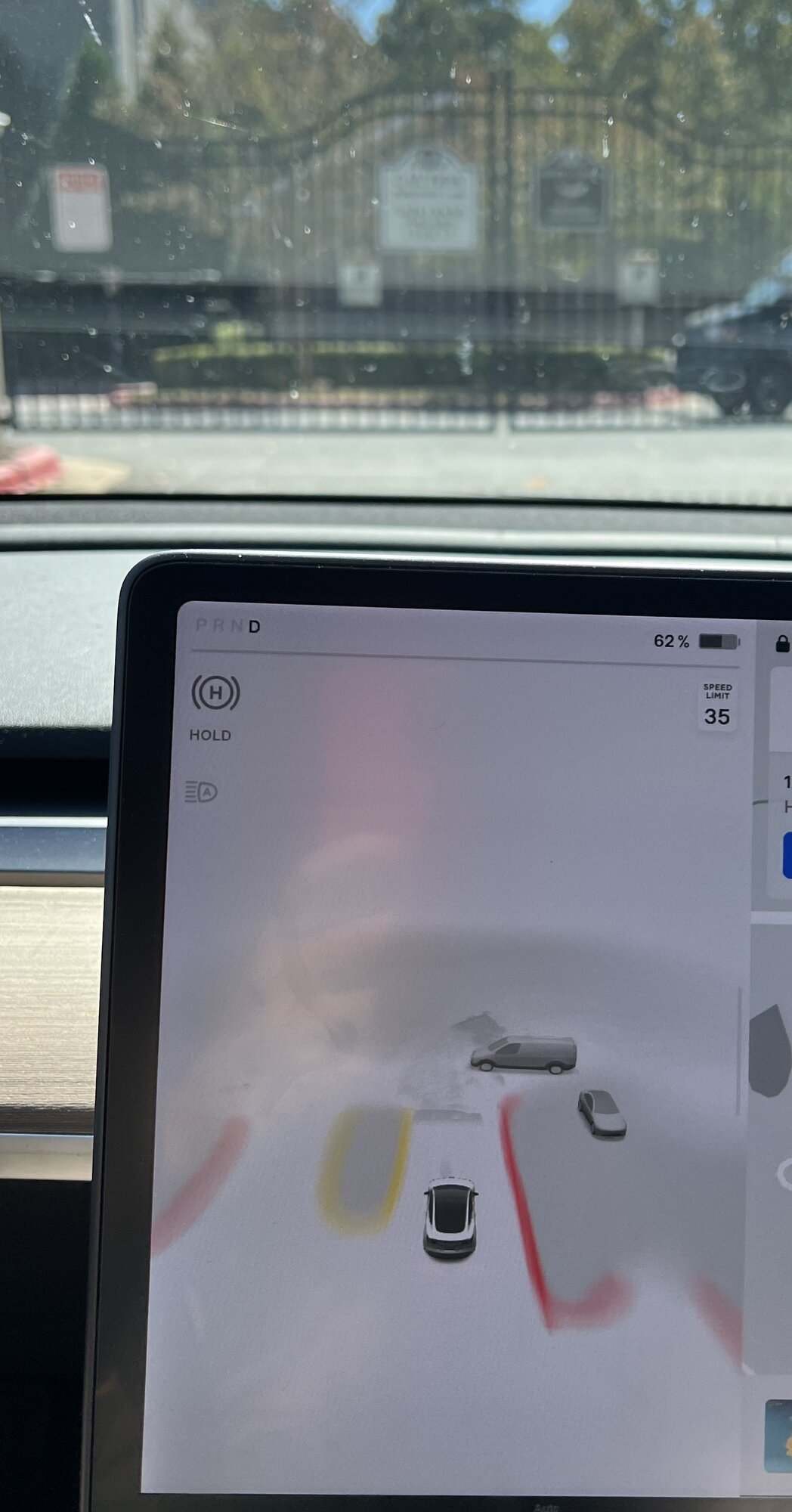

I'm still on 10.69.2.2, but wondering if anyone else has seen something like this with the visuals? I don't remember it displaying the gates to my community before this update. Wondering if this is output of the occupancy network or something else that I missed.

> The "FSD Visualization Preview" you get on HW3 without FSDb clearly identifies and displays turn lane markings

The preview visualizations are still there, although FSD Beta seems to also draw white driveable and gray non-driveable surfaces on top of those visualizations. So I agree most of the time the arrows seem to be missing, but they can appear, such as this right turn and bike lane from a recent 10.69.2.3 video:

I describe it as "drawn over" because sometimes you can see things like waste collection carts get progressively more cut off: the preview visualization placed them incorrectly, and FSD Beta correctly draws the road surface at the correct elevation.

> I'm still on 10.69.2.2, but wondering if anyone else has seen something like this with the visuals?

Yeah, 10.69 Occupancy Network visualizations show things that are in driveable space and AI Day showed gates and garages as brown boxes:

> I'm rather disappointed that I'm still much better at judging a "regen only" stop than FSDb is.
>
> Oh well.

Yep, it's ironic how little regen seems to be used.

> I still doubt they prioritize snapshots. I see so many people snapping preferential / comfort / silly issues constantly. If I were Tesla I'd prioritize hard brakes or steering disengages (more likely safety issues)

Dirty Tesla provided some insights after chatting with Autopilot team at AI Day:

Hitting the clip button at the top of the screen for reporting things is extremely important to them and gives them a lot of data. Now I don't have any specifics, but let's keep in mind, you should be using that, and I'm going to use it more now that I've heard these things. It helps them a lot with situations that you are having trouble with.

Now to add on to that, maybe there's something you've been reporting -- because I know it's happened to me -- for a long time that's still not fixed, and you're like "well, why am I reporting this if I've been reporting it for a year." I've been reporting things for almost 2 years at this point that still seem to not be working correctly. It seems like the goal right now / the focus is, as it should be, on safety. So anything that's safety critical is what's going to get attention first -- number one. Things that are more convenience or more just good driving etiquette are a little bit on the back burner -- they're not as much of a focus of the beta right now.

Again, I didn't get any of this specifically. It's not like I asked this exact question and got this exact answer. But in my head how I put it all together: an example is no right on red… the car will still try to turn if that sign is present. The driver can easily just tap the brake; it's not a safety concern -- it's not something where the car is running into somebody. Tesla's not focused on making that work right now because they have other things they are focusing on. Again, take a lot of this with a grain of salt, but these are just the kind of things I've gathered from my discussions there.

And our driving is very helpful for the team, so driving is good. There's nothing specific like you should do this or do this. Just drive, click that report button when you can, when you find things you think need to be reported. And that data does help them.

Although Tesla didn't directly talk about the report button during the presentation, the Data Engine section glossed over how they knew to look for the listed challenging cases: parked vehicles at turns, buses and large trucks, leading stopped vehicle, vehicles on curvy roads, vehicles in parking lots. Maybe it was from explicit feedback that allowed Tesla to query the existing snapshots to get say 100s of initial examples to then ask the fleet to send back potentially 100,000+ examples.

sleepydoc

Well-Known Member

> Yep, it's ironic how little regen seems to be used.

It’s also ironic that I routinely get 230 Wh/mi when using FSD. Not sure how to reconcile these two.

momo3605

Active Member

> It’s also ironic that I routinely get 230 Wh/mi when using FSD. Not sure how to reconcile these two.

Are you driving an SR+? I get 300 Wh/mi on FSD beta. Significantly worse than when I drive myself.
